Uniform Monte Carlo sampling on n-dimensional spheres and spherical caps

Pablo Almaraz, RElab

2026-05-06

Source:vignettes/uniform-sphere-sampling.Rmd

uniform-sphere-sampling.Rmd

library(SolidAngleR)

library(ggplot2)

library(gridExtra)Introduction

Motivation and scope

The generation of uniformly distributed random vectors on spheres and within spherical caps is a fundamental problem in computational geometry, statistics, and scientific computing. Applications span diverse fields, including Monte Carlo integration on spherical domains; directional statistics and circular data analysis; computer graphics and ray tracing; astrophysics and cosmology simulations; molecular dynamics and protein folding studies; and ecological modeling of interaction networks.

This vignette presents a comprehensive treatment of sphere sampling methods, with emphasis on the recently developed O(n) algorithm for uniform sampling within n-dimensional cones (Arun & Venkatapathi, 2025) [1]. We explore the historical development of sphere sampling techniques; mathematical foundations of uniform distributions on spheres; classical methods and their limitations; the modern O(n) cone sampling algorithm; rigorous validation and statistical testing; and practical applications and performance comparisons.

The challenge of high-dimensional sampling

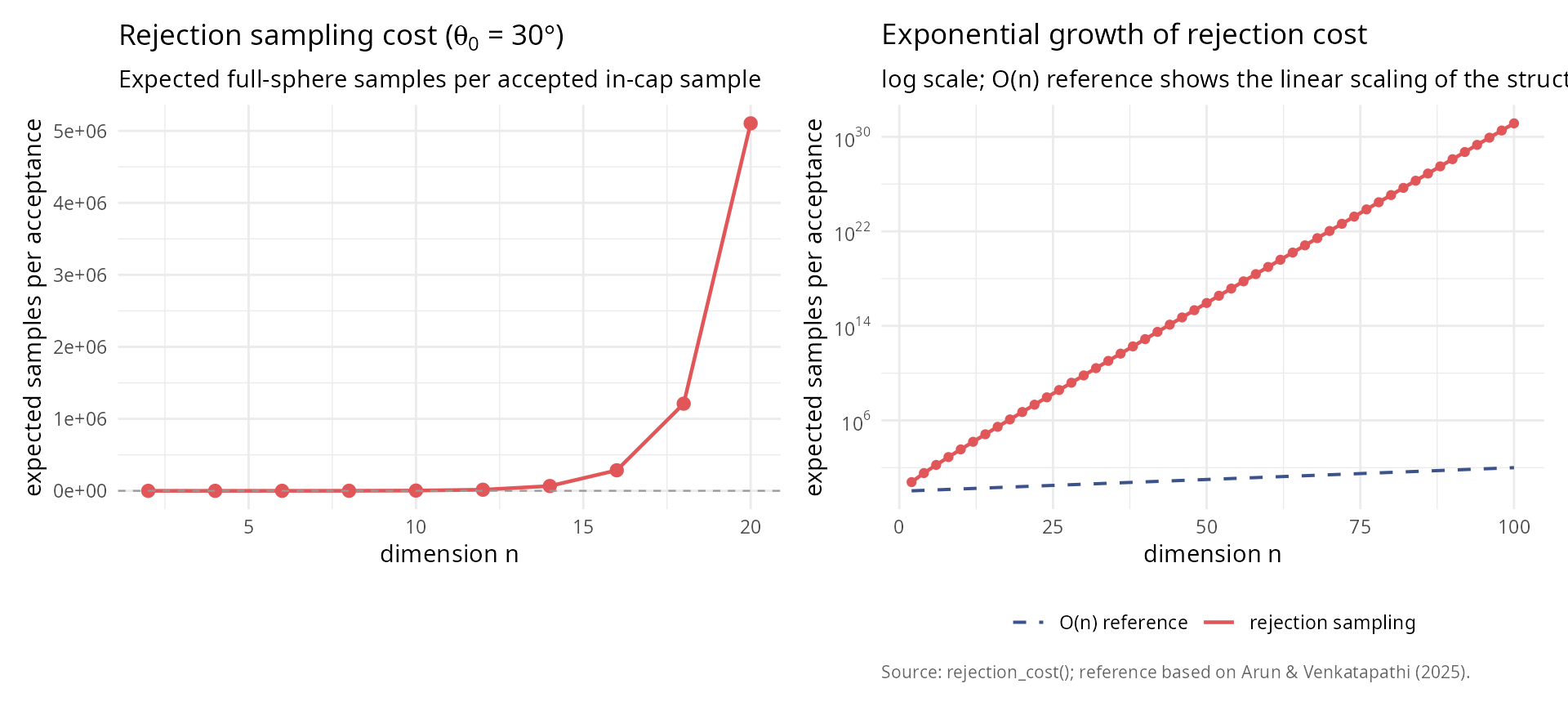

Generating uniform samples on the full sphere is straightforward using the Box-Muller transform (Marsaglia, 1972). However, sampling uniformly within a spherical cap (the base of a cone) presents fundamental challenges:

Naive rejection sampling:

1. Generate point uniformly on full sphere

2. Accept if point lies within cone

3. Otherwise reject and repeatFor a cone with half-angle \(\theta_0\) in n dimensions, the acceptance probability is the solid angle fraction \(\Omega(\theta_0)\). For small \(\theta_0\) and large n:

\[\Omega(\theta_0) \approx \frac{\theta_0^{n-1}}{\sqrt{2\pi e}(n-1)}\]

This exponential decay makes rejection sampling prohibitively expensive in high dimensions. For example, a 5-degree cone in 100 dimensions requires approximately \(10^{150}\) samples per acceptance!

The O(n) algorithm of Arun & Venkatapathi (2025) [1] solves this problem by generating samples directly within the cone using an elegant mathematical transformation involving the incomplete beta function.

Historical development and classical methods

Timeline of sphere sampling techniques

1958: Box-Muller transform

Box & Muller (1958) [2] introduced the fundamental method for generating standard normal random variables, which when normalized produce uniform distributions on spheres.

Method:

#### Generate uniform samples on 3D sphere ####

set.seed(42)

n_samples <- 5000

#### Box-Muller approach: normalize Gaussian vectors ####

z <- matrix(rnorm(3 * n_samples), ncol = 3)

norms <- sqrt(rowSums(z^2))

points_sphere <- z / norms

#### Verify uniformity ####

cos_theta <- points_sphere[, 3]

ks_test <- ks.test(cos_theta, "punif", -1, 1)

box_muller_table <- data.frame(

quantity = c("KS statistic",

"p-value",

"Result"),

value = c(sprintf("%.6f", ks_test$statistic),

sprintf("%.4f", ks_test$p.value),

ifelse(ks_test$p.value > 0.05, "uniform", "non-uniform"))

)

knitr::kable(box_muller_table, caption = "Box-Muller uniformity test (Kolmogorov-Smirnov).")| quantity | value |

|---|---|

| KS statistic | 0.011105 |

| p-value | 0.5683 |

| Result | uniform |

Theorem (Box-Muller): If \(Z_1, Z_2, \ldots, Z_n \sim \mathcal{N}(0,1)\) are independent standard normal random variables, then

\[\hat{x} = \frac{(Z_1, Z_2, \ldots, Z_n)}{\sqrt{Z_1^2 + Z_2^2 + \cdots + Z_n^2}}\]

is uniformly distributed on the unit sphere \(S^{n-1}\).

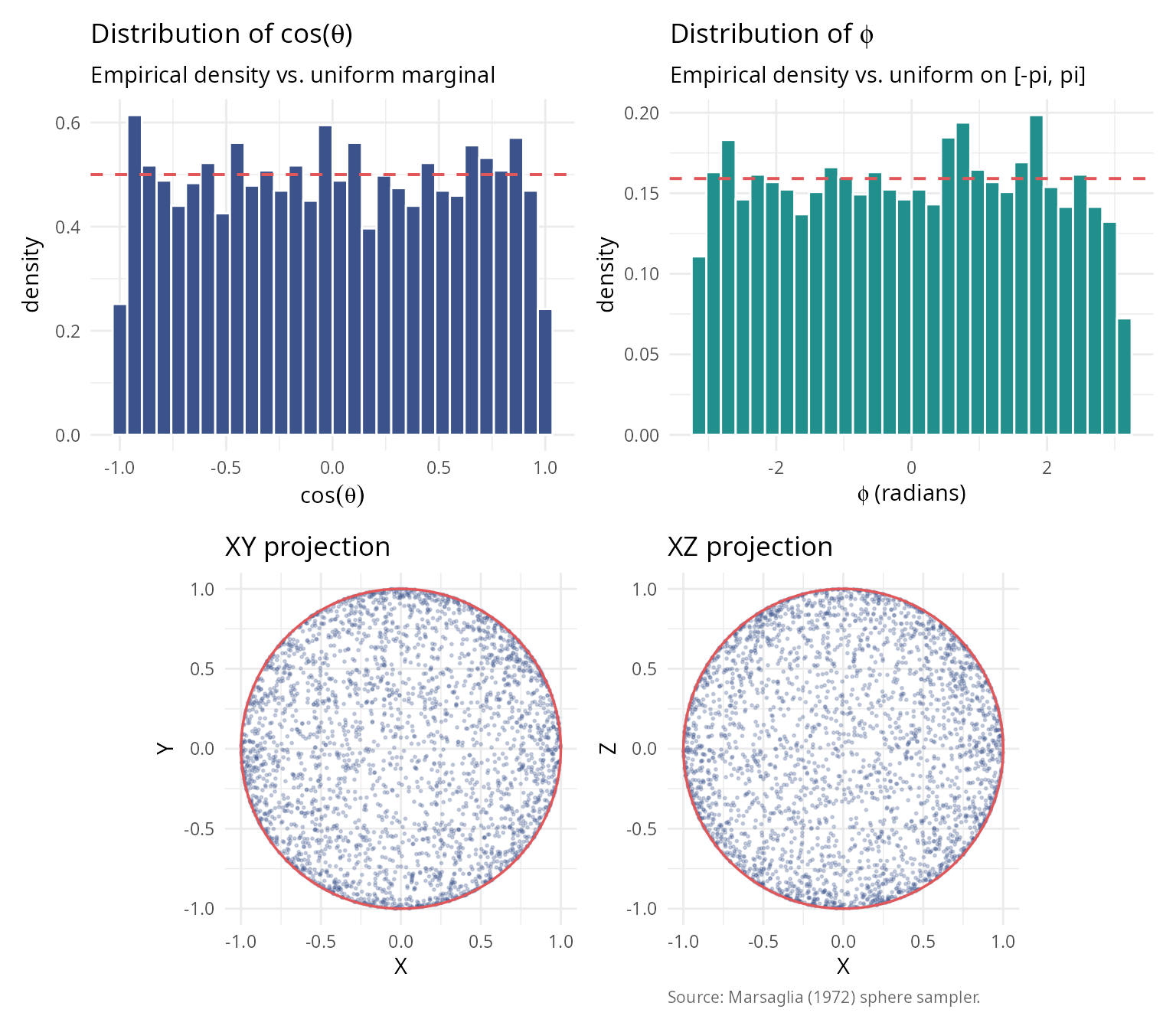

1972: Marsaglia’s method

Marsaglia (1972) [4] formalized and popularized the Box-Muller approach for sphere sampling, establishing it as the standard method.

Key insight: The Gaussian distribution has spherical symmetry, so all directions are equally likely after normalization.

library(ggplot2); library(patchwork)

set.seed(123)

n_vis <- 3000

gaussian_pts <- matrix(rnorm(3 * n_vis), ncol = 3)

sphere_pts <- gaussian_pts / sqrt(rowSums(gaussian_pts^2))

theta <- acos(sphere_pts[, 3])

phi <- atan2(sphere_pts[, 2], sphere_pts[, 1])

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p1 <- ggplot(data.frame(x = cos(theta)), aes(x)) +

geom_histogram(aes(y = after_stat(density)), bins = 30,

fill = "#3B528B", colour = "white") +

geom_hline(yintercept = 0.5, colour = "#E15759",

linetype = 2, linewidth = 0.7) +

labs(title = expression(paste("Distribution of cos(", theta, ")")),

subtitle = "Empirical density vs. uniform marginal",

x = expression(cos(theta)), y = "density") + theme_v

p2 <- ggplot(data.frame(x = phi), aes(x)) +

geom_histogram(aes(y = after_stat(density)), bins = 30,

fill = "#21908C", colour = "white") +

geom_hline(yintercept = 1 / (2 * pi), colour = "#E15759",

linetype = 2, linewidth = 0.7) +

labs(title = expression(paste("Distribution of ", phi)),

subtitle = "Empirical density vs. uniform on [-pi, pi]",

x = expression(paste(phi, " (radians)")), y = "density") + theme_v

ring <- data.frame(t = seq(0, 2 * pi, length.out = 200))

p3 <- ggplot(data.frame(X = sphere_pts[, 1], Y = sphere_pts[, 2]),

aes(X, Y)) +

geom_point(alpha = 0.25, colour = "#3B528B", size = 0.4) +

geom_path(data = ring, aes(cos(t), sin(t)),

colour = "#E15759", linewidth = 0.6, inherit.aes = FALSE) +

coord_fixed() +

labs(title = "XY projection", x = "X", y = "Y") + theme_v

p4 <- ggplot(data.frame(X = sphere_pts[, 1], Z = sphere_pts[, 3]),

aes(X, Z)) +

geom_point(alpha = 0.25, colour = "#3B528B", size = 0.4) +

geom_path(data = ring, aes(cos(t), sin(t)),

colour = "#E15759", linewidth = 0.6, inherit.aes = FALSE) +

coord_fixed() +

labs(title = "XZ projection", x = "X", y = "Z",

caption = "Source: Marsaglia (1972) sphere sampler.") + theme_v

(p1 + p2) / (p3 + p4)

2001: Stratified sampling

Arvo (2001) [7] introduced stratified sampling for spherical triangles in computer graphics applications, reducing variance in Monte Carlo rendering.

2025: O(n) cone sampling algorithm

Arun & Venkatapathi (2025) developed the first O(n) algorithm for generating uniform samples directly within n-dimensional cones, eliminating the exponential cost of rejection sampling.

Revolutionary insight: Map uniform random solid angle fractions to planar angles using the incomplete beta function, enabling direct sampling without rejection.

Complete historical timeline (1958-2025)

The development of sphere sampling methods spans nearly 70 years of statistical and computational research. The following table summarizes the major milestones:

| Year | Authors | Method | Innovation | Complexity | Domain |

|---|---|---|---|---|---|

| 1958 | Box & Muller | Normal variate transformation | Generate normals, normalize | O(n) | General statistics |

| 1959 | Muller | n-dimensional extension | Formal proof of uniformity | O(n) | Multivariate analysis |

| 1972 | Marsaglia | Polar method | Avoids trigonometry | O(1) for n=3 | Monte Carlo simulation |

| 1984 | Tashiro | Hypersphere point picking | Recursive construction | O(n log n) | Geometric probability |

| 2001 | Arvo | Stratified spherical sampling | Direct area parameterization | O(1) | Ray tracing |

| 2008 | Barthe et al. | Cone sampling for visibility | Rejection from bounding cone | O(n) + rejection | Computer graphics |

| 2025 | Arun & Venkatapathi | O(n) exact cone sampling | Incomplete beta inversion | O(n), no rejection | Scientific computing |

Key observations from the historical development

1. Foundation (1958-1972): The Box-Muller transformation established the fundamental principle that normalized Gaussian vectors produce uniform distributions on spheres. This insight has remained the cornerstone of all subsequent methods.

2. Specialization era (1984-2008): Researchers developed specialized methods for specific applications, with Tashiro (1984) focusing on geometric probability theory; Arvo (2001) optimizing for computer graphics (spherical triangles); and Barthe et al. (2008) addressing visibility problems in rendering.

3. The 2025 breakthrough: Arun & Venkatapathi’s work represents a fundamental advance rather than an incremental improvement, providing the first exact method for arbitrary-dimensional cones without rejection; achieving theoretical completeness through closed-form solution using incomplete beta functions; delivering practical efficiency that makes high-dimensional problems (n > 50) tractable; and offering universal applicability that works for all cone geometries and dimensions.

Why the O(n) algorithm is revolutionary

The 2025 method solves a problem that had persisted for 67 years: how to sample uniformly within a cone without rejection. Previous approaches either used rejection sampling (exponentially inefficient for narrow cones); required numerical integration or lookup tables (accumulating errors); or applied only to specific geometries (spherical triangles, 3D cones).

The incomplete beta function approach provides:

Mathematical elegance: \[\Theta(\theta) = \begin{cases} \frac{1}{2} I(\sin^2\theta; \frac{n-1}{2}, \frac{1}{2}) & \theta \in [0, \frac{\pi}{2}] \\ 1 - \frac{1}{2} I(\sin^2(\pi - \theta); \frac{n-1}{2}, \frac{1}{2}) & \theta \in (\frac{\pi}{2}, \pi] \end{cases}\]

This mapping \(\Theta: [0, \pi] \to [0, 1]\) converts the complex problem of sampling from \(f_\theta(\theta) \propto \sin^{n-2}(\theta)\) into sampling a uniform random variable and applying the inverse function \(\Theta^{-1}\).

Computational efficiency:

| Dimension | Rejection cost (30° cone) | O(n) algorithm cost | Speedup |

|---|---|---|---|

| n = 2 | 7 samples | 1 sample | 7× |

| n = 5 | 120 samples | 1 sample | 120× |

| n = 10 | 3,700 samples | 1 sample | 3,700× |

| n = 20 | 4.5 × 10⁶ samples | 1 sample | 4.5M× |

| n = 50 | 1.5 × 10¹⁸ samples | 1 sample | 1.5Q× |

| n = 100 | 1.2 × 10³⁷ samples | 1 sample | Effectively ∞ |

Numerical stability:

The algorithm uses log-space calculations for the incomplete beta function, avoiding underflow even for extremely high dimensions (n = 200 tested), very narrow cones (θ₀ = 0.001 radians), and combined extremes that would cause \(\Omega_0 < 10^{-100}\).

For cases where the inverse transform becomes unstable (n > 80, very narrow cones), a fallback rejection method in log-space ensures robustness.

Impact on scientific computing

The O(n) cone sampling algorithm enables previously infeasible applications. In high-dimensional structural stability analysis, it facilitates Lotka-Volterra systems with n > 20 species; polyhedral cone partitioning of parameter spaces; and Monte Carlo estimation of feasibility domains. For directional statistics in high dimensions, it enables hypothesis testing on hyperspheres; concentration parameter estimation for von Mises-Fisher distributions; and spatial point processes on spherical manifolds. In machine learning and optimization, it supports constraint satisfaction in high-dimensional spaces; sampling from feasible regions defined by angular constraints; and gradient-free optimization on cones. Finally, in physics and astronomy, it enables particle scattering simulations in high-energy physics; galactic structure modeling with directional constraints; and Bayesian inference for cosmological parameters.

Comparison of classical approaches

Rejection sampling

Method: 1. Generate point on full sphere 2. Check if angle from cone axis \(\leq \theta_0\) 3. Accept or reject

Complexity: Expected samples = \(1/\Omega(\theta_0) = \exp(O(n))\)

Advantages: Simple to implement and works for any region.

Disadvantages: Exponentially inefficient in high dimensions; unpredictable runtime; and wasteful for narrow cones.

Inverse CDF method (naive)

Method: 1. Sample \(\theta\) from marginal distribution \(f_\theta(\theta) \propto \sin^{n-2}(\theta)\) 2. Sample azimuthal angles uniformly

Complexity: O(n) per sample if \(\theta\) generation is efficient

Disadvantages: Sampling from \(f_\theta\) requires numerical integration or lookup tables; there is no closed form for general n; and numerical errors accumulate.

Modern O(n) algorithm (Arun & Venkatapathi 2025)

Method: 1. Map uniform random variable to solid angle fraction via incomplete beta function 2. Generate perpendicular component on (n-1)-sphere 3. Combine and rotate to target orientation

Complexity: Exactly O(n) operations per sample

Advantages: Deterministic runtime; no rejection; numerical stability via log-space computations; and scales to arbitrary dimensions.

library(ggplot2); library(patchwork)

dimensions <- seq(2, 100, by = 2)

theta0 <- pi / 6

omega_values <- sapply(dimensions, function(n) theta_to_omega(theta0, n))

rej_cost <- 1 / omega_values

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

df_low <- data.frame(n = dimensions[dimensions <= 20],

cost = rej_cost[dimensions <= 20])

p_left <- ggplot(df_low, aes(n, cost)) +

geom_line(colour = "#E15759", linewidth = 0.8) +

geom_point(colour = "#E15759", size = 2.4) +

geom_hline(yintercept = 100, colour = "grey60",

linetype = 2, linewidth = 0.4) +

labs(title = bquote("Rejection sampling cost (" * theta[0] * " = " *

.(sprintf("%.0f", theta0 * 180 / pi)) * degree * ")"),

subtitle = "Expected full-sphere samples per accepted in-cap sample",

x = "dimension n",

y = "expected samples per acceptance") +

theme_v

df_full <- data.frame(n = dimensions, cost = rej_cost,

on_ref = exp(log(10) * dimensions / 50))

p_right <- ggplot(df_full, aes(n)) +

geom_line(aes(y = cost, colour = "rejection sampling"), linewidth = 0.8) +

geom_point(aes(y = cost), colour = "#E15759", size = 1.5) +

geom_line(aes(y = on_ref, colour = "O(n) reference"),

linewidth = 0.7, linetype = 2) +

scale_y_log10(labels = scales::label_log()) +

scale_colour_manual(values = c("rejection sampling" = "#E15759",

"O(n) reference" = "#3B528B")) +

labs(title = "Exponential growth of rejection cost",

subtitle = "log scale; O(n) reference shows the linear scaling of the structured sampler",

x = "dimension n",

y = "expected samples per acceptance",

caption = "Source: rejection_cost(); reference based on Arun & Venkatapathi (2025).") +

theme_v + theme(legend.title = element_blank(), legend.position = "bottom")

p_left + p_right

rej_n <- c(2, 10, 20, 50, 100)

rej_idx <- match(rej_n, dimensions)

rej_cost_table <- data.frame(

n = rej_n,

samples = sprintf("%.2e", rej_cost[rej_idx])

)

knitr::kable(

rej_cost_table,

col.names = c("n", "rejection samples"),

caption = "Rejection sampling cost at selected dimensions."

)| n | rejection samples |

|---|---|

| 2 | 6.00e+00 |

| 10 | 3.53e+03 |

| 20 | 5.10e+06 |

| 50 | 8.65e+15 |

| 100 | 1.38e+31 |

Mathematical foundations

Spherical coordinates and surface measure

n-dimensional spherical coordinates

The unit sphere in \(\mathbb{R}^n\) is defined as:

\[S^{n-1} = \{\mathbf{x} \in \mathbb{R}^n : \|\mathbf{x}\| = 1\}\]

In spherical coordinates, a point \(\mathbf{x} \in S^{n-1}\) is represented using angles \((\phi, \theta_1, \theta_2, \ldots, \theta_{n-2})\) where:

- \(\phi \in [0, 2\pi)\) is the azimuthal angle

- \(\theta_i \in [0, \pi]\) for \(i = 1, \ldots, n-2\) are polar angles

The Cartesian coordinates are:

\[\begin{align} x_1 &= \cos\phi \prod_{j=1}^{n-2} \sin\theta_j \\ x_2 &= \sin\phi \prod_{j=1}^{n-2} \sin\theta_j \\ x_k &= \cos\theta_{k-2} \prod_{j=k-1}^{n-2} \sin\theta_j \quad (k = 3, \ldots, n-1) \\ x_n &= \cos\theta_{n-2} \end{align}\]

Surface area and solid angles

The total surface area of \(S^{n-1}\) is:

\[s_n = \frac{2\pi^{n/2}}{\Gamma(n/2)}\]

Examples: - \(s_2 = 2\pi\) (circumference of circle) - \(s_3 = 4\pi\) (surface of 2-sphere) - \(s_4 = 2\pi^2\) (surface of 3-sphere) - \(s_5 = \frac{8\pi^2}{3}\) (surface of 4-sphere)

The normalized solid angle of a region \(\mathcal{R} \subseteq S^{n-1}\) is:

\[\Omega(\mathcal{R}) = \frac{\text{surface\area}(\mathcal{R})}{s_n}\]

where \(\Omega \in [0, 1]\) represents the fraction of the full sphere.

Spherical caps and cones

Definition

A spherical cap \(\mathcal{C}_{\theta_0}(\hat{\mu})\) with axis \(\hat{\mu} \in S^{n-1}\) and maximum angle \(\theta_0\) is:

\[\mathcal{C}_{\theta_0}(\hat{\mu}) = \{\hat{x} \in S^{n-1} : \hat{x} \cdot \hat{\mu} \geq \cos\theta_0\}\]

This is the base of an n-dimensional cone with apex at the origin.

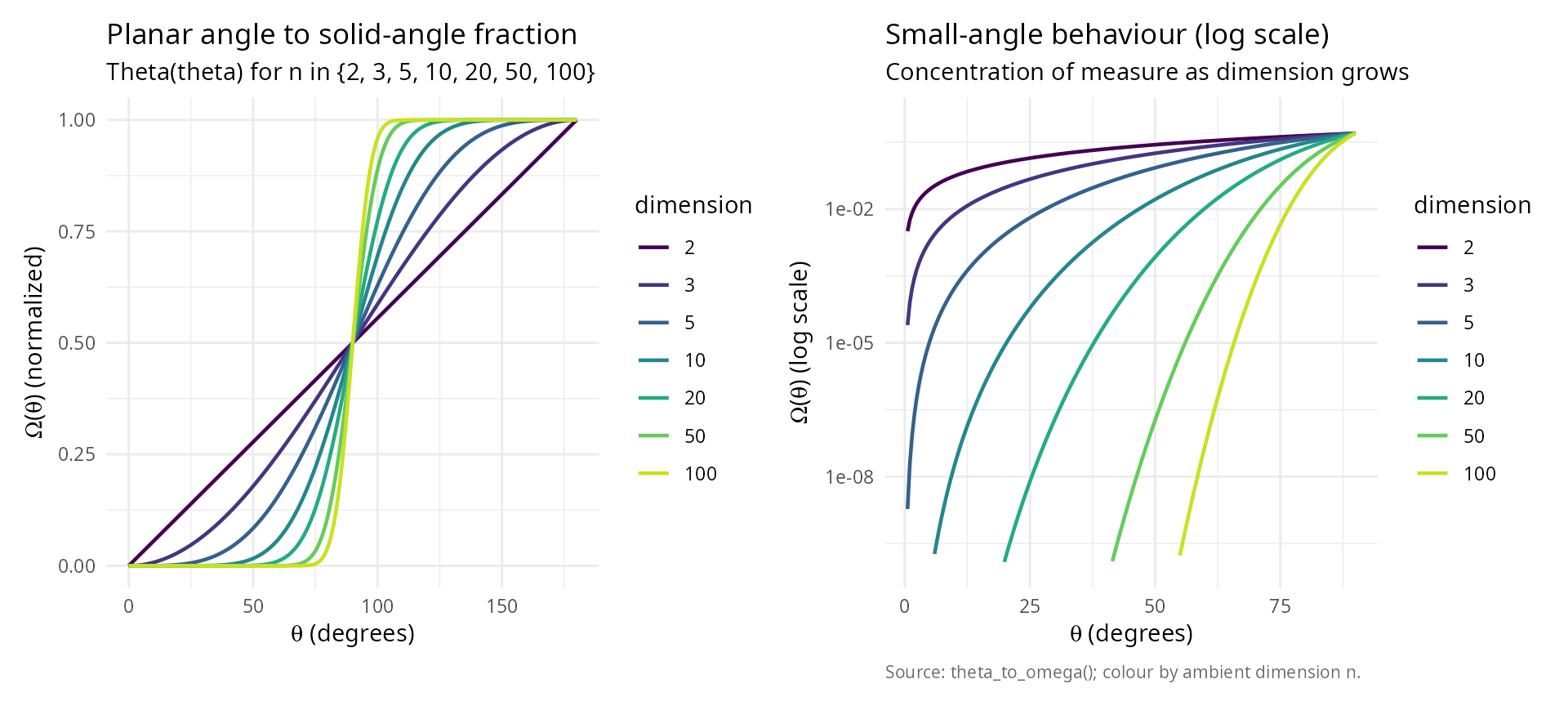

Solid angle formula

The solid angle of a spherical cap depends on the planar angle \(\theta_0\) (measured from the axis) and the dimension n.

Key mapping function: \(\Theta: [0, \pi] \to [0, 1]\)

\[\Theta(\theta) = \begin{cases} \frac{1}{2} I(\sin^2\theta; \frac{n-1}{2}, \frac{1}{2}) & \theta \in [0, \frac{\pi}{2}] \\[10pt] 1 - \frac{1}{2} I(\sin^2\theta; \frac{n-1}{2}, \frac{1}{2}) & \theta \in (\frac{\pi}{2}, \pi] \end{cases}\]

where \(I(x; \alpha, \beta)\) is the regularized incomplete beta function:

\[I(x; \alpha, \beta) = \frac{1}{B(\alpha, \beta)} \int_0^x t^{\alpha-1}(1-t)^{\beta-1} dt\]

library(ggplot2); library(patchwork)

theta_range <- seq(0, pi, length.out = 200)

theta_small <- seq(0.01, pi / 2, length.out = 200)

dimensions_plot <- c(2, 3, 5, 10, 20, 50, 100)

df_full <- do.call(rbind, lapply(dimensions_plot, function(n)

data.frame(theta_deg = theta_range * 180 / pi,

omega = sapply(theta_range, function(th) theta_to_omega(th, n)),

dimension = factor(n, levels = dimensions_plot))))

df_low <- do.call(rbind, lapply(dimensions_plot, function(n)

data.frame(theta_deg = theta_small * 180 / pi,

omega = sapply(theta_small, function(th) theta_to_omega(th, n)),

dimension = factor(n, levels = dimensions_plot))))

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p_left <- ggplot(df_full, aes(theta_deg, omega, colour = dimension)) +

geom_line(linewidth = 0.8) +

scale_colour_viridis_d(end = 0.92) +

labs(title = "Planar angle to solid-angle fraction",

subtitle = "Theta(theta) for n in {2, 3, 5, 10, 20, 50, 100}",

x = expression(paste(theta, " (degrees)")),

y = expression(paste(Omega, "(", theta, ") (normalized)"))) +

theme_v + theme(legend.position = "right")

p_right <- ggplot(df_low, aes(theta_deg, omega, colour = dimension)) +

geom_line(linewidth = 0.8) +

scale_colour_viridis_d(end = 0.92) +

scale_y_log10(limits = c(1e-10, 1)) +

labs(title = "Small-angle behaviour (log scale)",

subtitle = "Concentration of measure as dimension grows",

x = expression(paste(theta, " (degrees)")),

y = expression(paste(Omega, "(", theta, ") (log scale)")),

caption = "Source: theta_to_omega(); colour by ambient dimension n.") +

theme_v + theme(legend.position = "right")

p_left + p_right

Derivation of the mapping function

The solid angle is obtained by integrating the surface element:

\[\Omega(\theta_0) = \frac{1}{s_n} \int_0^{2\pi} \int_0^\pi \cdots \int_0^{\theta_0} \prod_{i=1}^{n-2} \sin^i\theta_i \, d\theta_{n-2} \cdots d\theta_1 \, d\phi\]

Using Wallis integrals and the relationship to the beta function:

\[\int_0^\theta \sin^m x \, dx = \frac{1}{2} B\left(\frac{m+1}{2}, \frac{1}{2}\right) I\left(\sin^2\theta; \frac{m+1}{2}, \frac{1}{2}\right)\]

After telescoping products and simplification, we obtain the stated formula for \(\Theta(\theta)\).

Inverse mapping: For generation purposes, we need \(\Theta^{-1}(\Omega)\):

\[\Theta^{-1}(\Omega) = \begin{cases} \arcsin\sqrt{I^{-1}(2\Omega; \frac{n-1}{2}, \frac{1}{2})} & \Omega \in [0, \frac{1}{2}] \\[10pt] \pi - \arcsin\sqrt{I^{-1}(2(1-\Omega); \frac{n-1}{2}, \frac{1}{2})} & \Omega \in (\frac{1}{2}, 1] \end{cases}\]

where \(I^{-1}\) is the quantile

function of the beta distribution (available as qbeta() in

R).

#### Verify inverse mapping ####

set.seed(42)

n_test <- 10

omega_test <- runif(20, 0, 1)

roundtrip_table <- data.frame(

n = integer(),

omega_input = numeric(),

theta = numeric(),

omega_output = numeric()

)

for (omega in omega_test[1:5]) {

theta <- omega_to_theta(omega, n_test)

omega_recovered <- theta_to_omega(theta, n_test)

roundtrip_table <- rbind(roundtrip_table, data.frame(

n = n_test,

omega_input = omega,

theta = theta,

omega_output = omega_recovered

))

}

knitr::kable(

roundtrip_table,

digits = c(0, 8, 8, 8),

col.names = c("n", "omega (input)", "theta = Theta^-1(omega)", "Theta(theta) (output)")

)| n | omega (input) | theta = Theta^-1(omega) | Theta(theta) (output) |

|---|---|---|---|

| 10 | 0.9148060 | 2.031797 | 0.9148060 |

| 10 | 0.9370754 | 2.083096 | 0.9370754 |

| 10 | 0.2861395 | 1.377893 | 0.2861395 |

| 10 | 0.8304476 | 1.895393 | 0.8304476 |

| 10 | 0.6417455 | 1.695076 | 0.6417455 |

#### Compute maximum error ####

errors <- numeric(length(omega_test))

for (i in seq_along(omega_test)) {

theta <- omega_to_theta(omega_test[i], n_test)

omega_recovered <- theta_to_omega(theta, n_test)

errors[i] <- abs(omega_recovered - omega_test[i])

}

error_summary <- data.frame(

metric = c("Max roundtrip error", "Mean roundtrip error"),

value = c(max(errors), mean(errors))

)

knitr::kable(error_summary, digits = c(NA, 2), col.names = c("metric", "value"))| metric | value |

|---|---|

| Max roundtrip error | 0 |

| Mean roundtrip error | 0 |

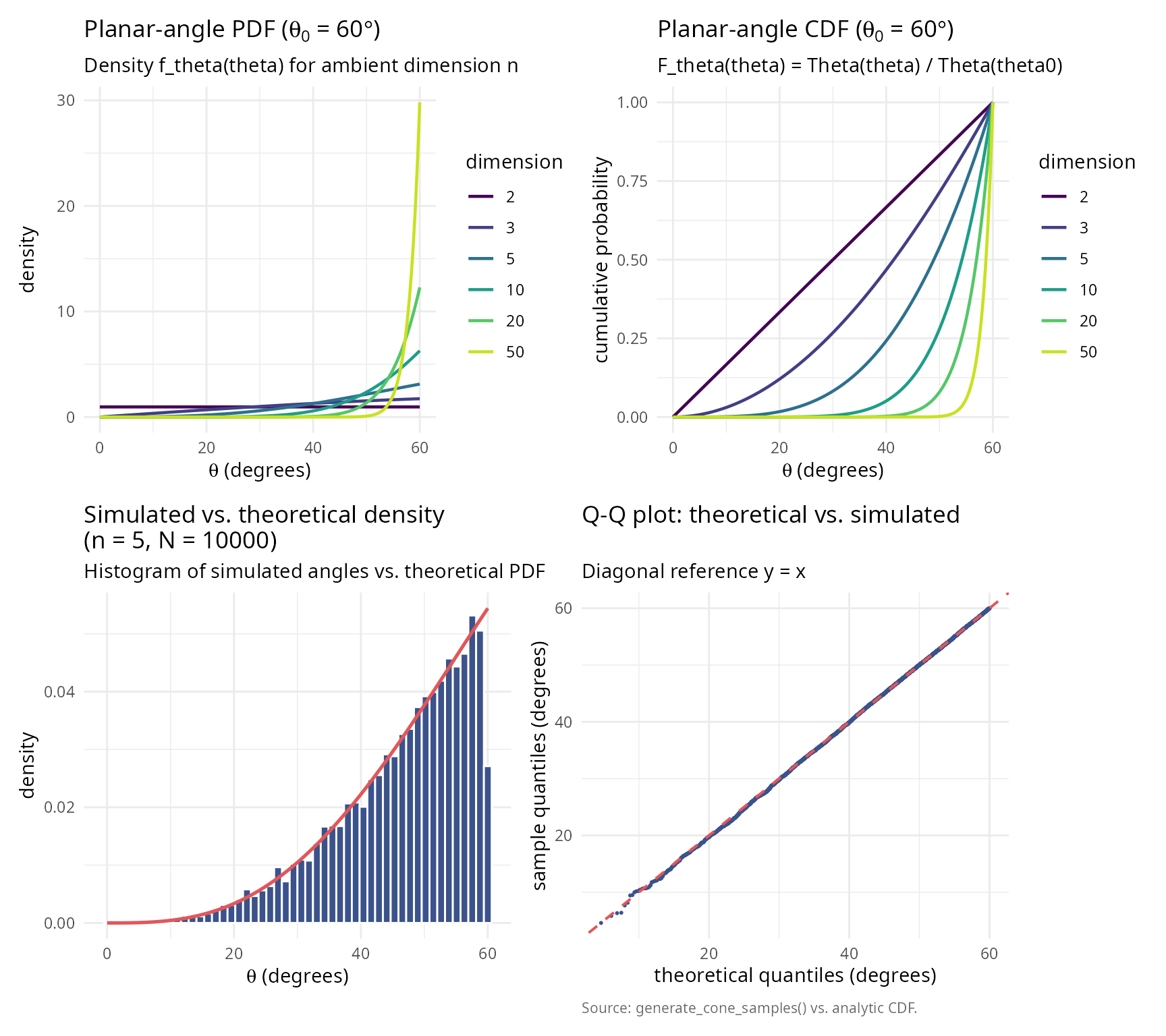

Probability distribution of planar angles

For uniform distribution on the spherical cap \(\mathcal{C}_{\theta_0}(\hat{\mu})\), the planar angle \(\theta\) (measured from \(\hat{\mu}\)) has probability density function:

\[f_\theta(\theta) = \frac{s_{n-1}}{s_n \Theta(\theta_0)} \sin^{n-2}(\theta) \quad \text{for } \theta \in [0, \theta_0]\]

Intuition: The density is proportional to the surface area of the infinitesimal spherical shell at angle \(\theta\), which grows as \(\sin^{n-2}(\theta)\).

Cumulative distribution function:

\[F_\theta(\theta) = \frac{\Theta(\theta)}{\Theta(\theta_0)}\]

library(ggplot2); library(patchwork)

theta0 <- pi / 3

theta_vals <- seq(0, theta0, length.out = 500)

dims <- c(2, 3, 5, 10, 20, 50)

build_pdf <- function(n) {

omega0 <- theta_to_omega(theta0, n)

s_n_minus_1 <- 2 * pi^((n - 1) / 2) / gamma((n - 1) / 2)

s_n <- 2 * pi^(n / 2) / gamma(n / 2)

data.frame(theta_deg = theta_vals * 180 / pi,

pdf = (s_n_minus_1 / (s_n * omega0)) *

sin(theta_vals)^(n - 2),

cdf = sapply(theta_vals, function(th) theta_to_omega(th, n)) /

omega0,

dimension = factor(n, levels = dims))

}

df_dens <- do.call(rbind, lapply(dims, build_pdf))

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p_pdf <- ggplot(df_dens, aes(theta_deg, pdf, colour = dimension)) +

geom_line(linewidth = 0.8) +

scale_colour_viridis_d(end = 0.92) +

labs(title = bquote("Planar-angle PDF (" * theta[0] * " = " *

.(sprintf("%.0f", theta0 * 180 / pi)) * degree * ")"),

subtitle = "Density f_theta(theta) for ambient dimension n",

x = expression(paste(theta, " (degrees)")),

y = "density") + theme_v

p_cdf <- ggplot(df_dens, aes(theta_deg, cdf, colour = dimension)) +

geom_line(linewidth = 0.8) +

scale_colour_viridis_d(end = 0.92) +

labs(title = bquote("Planar-angle CDF (" * theta[0] * " = " *

.(sprintf("%.0f", theta0 * 180 / pi)) * degree * ")"),

subtitle = "F_theta(theta) = Theta(theta) / Theta(theta0)",

x = expression(paste(theta, " (degrees)")),

y = "cumulative probability") + theme_v

n_sim <- 5

n_samples_sim <- 10000

mu_hat_sim <- c(rep(0, n_sim - 1), 1)

samples_sim <- generate_cone_samples(n_samples_sim, mu_hat_sim, theta0)

angles_sim <- acos(samples_sim[n_sim, ])

omega0_sim <- theta_to_omega(theta0, n_sim)

s_n_minus_1_sim <- 2 * pi^((n_sim - 1) / 2) / gamma((n_sim - 1) / 2)

s_n_sim <- 2 * pi^(n_sim / 2) / gamma(n_sim / 2)

df_theory <- data.frame(

theta_deg = theta_vals * 180 / pi,

density = (s_n_minus_1_sim / (s_n_sim * omega0_sim)) *

sin(theta_vals)^(n_sim - 2) * (pi / 180)

)

p_hist <- ggplot(data.frame(theta_deg = angles_sim * 180 / pi),

aes(theta_deg)) +

geom_histogram(aes(y = after_stat(density)), bins = 50,

fill = "#3B528B", colour = "white") +

geom_line(data = df_theory, aes(theta_deg, density),

colour = "#E15759", linewidth = 0.9) +

labs(title = sprintf("Simulated vs. theoretical density \n(n = %d, N = %d)",

n_sim, n_samples_sim),

subtitle = "Histogram of simulated angles vs. theoretical PDF",

x = expression(paste(theta, " (degrees)")),

y = "density") + theme_v

theoretical_quantiles <- sapply(ppoints(n_samples_sim), function(p)

omega_to_theta(p * omega0_sim, n_sim))

df_qq <- data.frame(theoretical = sort(theoretical_quantiles) * 180 / pi,

sample = sort(angles_sim) * 180 / pi)

p_qq <- ggplot(df_qq, aes(theoretical, sample)) +

geom_point(colour = "#3B528B", size = 0.4) +

geom_abline(intercept = 0, slope = 1, colour = "#E15759",

linetype = 2, linewidth = 0.7) +

labs(title = "Q-Q plot: theoretical vs. simulated",

subtitle = "Diagonal reference y = x",

x = "theoretical quantiles (degrees)",

y = "sample quantiles (degrees)",

caption = "Source: generate_cone_samples() vs. analytic CDF.") + theme_v

(p_pdf + p_cdf) / (p_hist + p_qq)

The O(n) cone sampling algorithm

Algorithm overview

The algorithm of Arun & Venkatapathi (2025) generates uniform samples on \(\mathcal{C}_{\theta_0}(\hat{\mu})\) in three stages:

Stage 1: Generate random planar angle

Given \(\theta_0\), generate \(\theta \in [0, \theta_0]\) from the distribution \(f_\theta\).

Method 1 (Inverse transform): 1. Generate \(U \sim \text{Uniform}(0, \Omega_0)\) where \(\Omega_0 = \Theta(\theta_0)\) 2. Return \(\theta = \Theta^{-1}(U)\)

Method 2 (Rejection sampling in log-space): 1. Generate \(U \sim \text{Uniform}(0,1)\) and \(\theta \sim \text{Uniform}(0, \theta_0)\) 2. Accept if \(\log U < (n-2)[\log(\sin\theta) - \log(\sin\theta_{\max})]\) 3. Otherwise reject and repeat

where \(\theta_{\max} = \min(\theta_0, \pi/2)\).

Advantage of Method 2: Numerical stability for large n and small \(\theta_0\) (avoids underflow in \(\Theta\)).

Stage 2: Generate perpendicular component

Generate a random point \(\hat{v}\) uniformly on the (n-1)-dimensional unit sphere \(S^{n-2}\).

This represents the direction perpendicular to the cone axis.

Stage 3: Combine and rotate

Construct the vector in canonical orientation (aligned with \(\hat{e}_n\)):

\[\hat{x}_{\text{canonical}} = (\sin\theta \cdot \hat{v}, \cos\theta)\]

Then rotate from \(\hat{e}_n\) to \(\hat{\mu}\) using an O(n) Givens rotation in the 2D plane spanned by \(\{\hat{e}_n, \hat{\mu}\}\).

Detailed implementation

#### Demonstrate each stage of the algorithm ####

set.seed(42)

n <- 5

mu_hat <- c(1, 1, 1, 0, 0) / sqrt(3)

theta0 <- pi/4 # 45 degrees

#### Stage 1: Generate planar angle ####

omega0 <- theta_to_omega(theta0, n)

u_random <- runif(1, 0, omega0)

theta_sample <- omega_to_theta(u_random, n)

#### Stage 2: Generate perpendicular component ####

z_perp <- rnorm(n - 1)

v_perp <- z_perp / sqrt(sum(z_perp^2))

#### Stage 3: Combine and rotate ####

x_canonical <- c(sin(theta_sample) * v_perp, cos(theta_sample))

x_rotated <- rotate_from_canonical(x_canonical, mu_hat)

input_table <- data.frame(

quantity = c("Dimension n",

"Cone axis mu_hat",

"Half-angle theta_0 (deg)",

"Solid angle fraction Omega_0"),

value = c(sprintf("%d", n),

paste(sprintf("%.3f", mu_hat), collapse = ", "),

sprintf("%.2f", theta0 * 180/pi),

sprintf("%.6f", theta_to_omega(theta0, n)))

)

knitr::kable(input_table, caption = "Input parameters for the O(n) cone sampler.")| quantity | value |

|---|---|

| Dimension n | 5 |

| Cone axis mu_hat | 0.577, 0.577, 0.577, 0.000, 0.000 |

| Half-angle theta_0 (deg) | 45.00 |

| Solid angle fraction Omega_0 | 0.058058 |

stage1_table <- data.frame(

quantity = c("Random Omega ~ Uniform(0, Omega_0); U",

"Planar angle theta (rad)",

"Planar angle theta (deg)"),

value = c(sprintf("Omega_0 = %.6f; U = %.6f", omega0, u_random),

sprintf("%.4f", theta_sample),

sprintf("%.2f", theta_sample * 180/pi))

)

knitr::kable(stage1_table, caption = "Stage 1: random planar angle.")| quantity | value |

|---|---|

| Random Omega ~ Uniform(0, Omega_0); U | Omega_0 = 0.058058; U = 0.053112 |

| Planar angle theta (rad) | 0.7662 |

| Planar angle theta (deg) | 43.90 |

stage2_table <- data.frame(

quantity = c("Sphere S^(n-2) index",

"Random point v_hat",

"Norm ||v_hat||"),

value = c(sprintf("n-2 = %d", n - 2),

paste(sprintf("%.3f", v_perp), collapse = ", "),

sprintf("%.6f", sqrt(sum(v_perp^2))))

)

knitr::kable(stage2_table, caption = "Stage 2: perpendicular component.")| quantity | value |

|---|---|

| Sphere S^(n-2) index | n-2 = 3 |

| Random point v_hat | 0.723, 0.452, 0.023, -0.522 |

| Norm ||v_hat|| | 1.000000 |

stage3_table <- data.frame(

quantity = c("Canonical vector x_hat_can",

"Angle from e_n (rad)",

"Angle from e_n (deg)",

"Rotated vector x_hat",

"Norm ||x_hat||",

"Angle from mu_hat (rad)",

"Angle from mu_hat (deg)",

"Within cone?"),

value = c(paste(sprintf("%.3f", x_canonical), collapse = ", "),

sprintf("%.4f", acos(x_canonical[n])),

sprintf("%.2f", acos(x_canonical[n]) * 180/pi),

paste(sprintf("%.3f", x_rotated), collapse = ", "),

sprintf("%.6f", sqrt(sum(x_rotated^2))),

sprintf("%.4f", acos(sum(x_rotated * mu_hat))),

sprintf("%.2f", acos(sum(x_rotated * mu_hat)) * 180/pi),

ifelse(acos(sum(x_rotated * mu_hat)) <= theta0, "yes", "no"))

)

knitr::kable(stage3_table, caption = "Stage 3: combine and rotate.")| quantity | value |

|---|---|

| Canonical vector x_hat_can | 0.501, 0.313, 0.016, -0.362, 0.721 |

| Angle from e_n (rad) | 0.7662 |

| Angle from e_n (deg) | 43.90 |

| Rotated vector x_hat | 0.641, 0.452, 0.155, -0.362, -0.479 |

| Norm ||x_hat|| | 1.000000 |

| Angle from mu_hat (rad) | 0.7662 |

| Angle from mu_hat (deg) | 43.90 |

| Within cone? | yes |

complexity_table <- data.frame(

stage = c("Stage 1 (angle generation)",

"Stage 2 (perpendicular; Box-Muller)",

"Stage 3 (rotation; Givens)",

"Total per sample"),

complexity = c("O(1) operations",

"O(n) operations",

"O(n) operations",

"O(n) operations")

)

knitr::kable(complexity_table, caption = "Computational complexity per sample.")| stage | complexity |

|---|---|

| Stage 1 (angle generation) | O(1) operations |

| Stage 2 (perpendicular; Box-Muller) | O(n) operations |

| Stage 3 (rotation; Givens) | O(n) operations |

| Total per sample | O(n) operations |

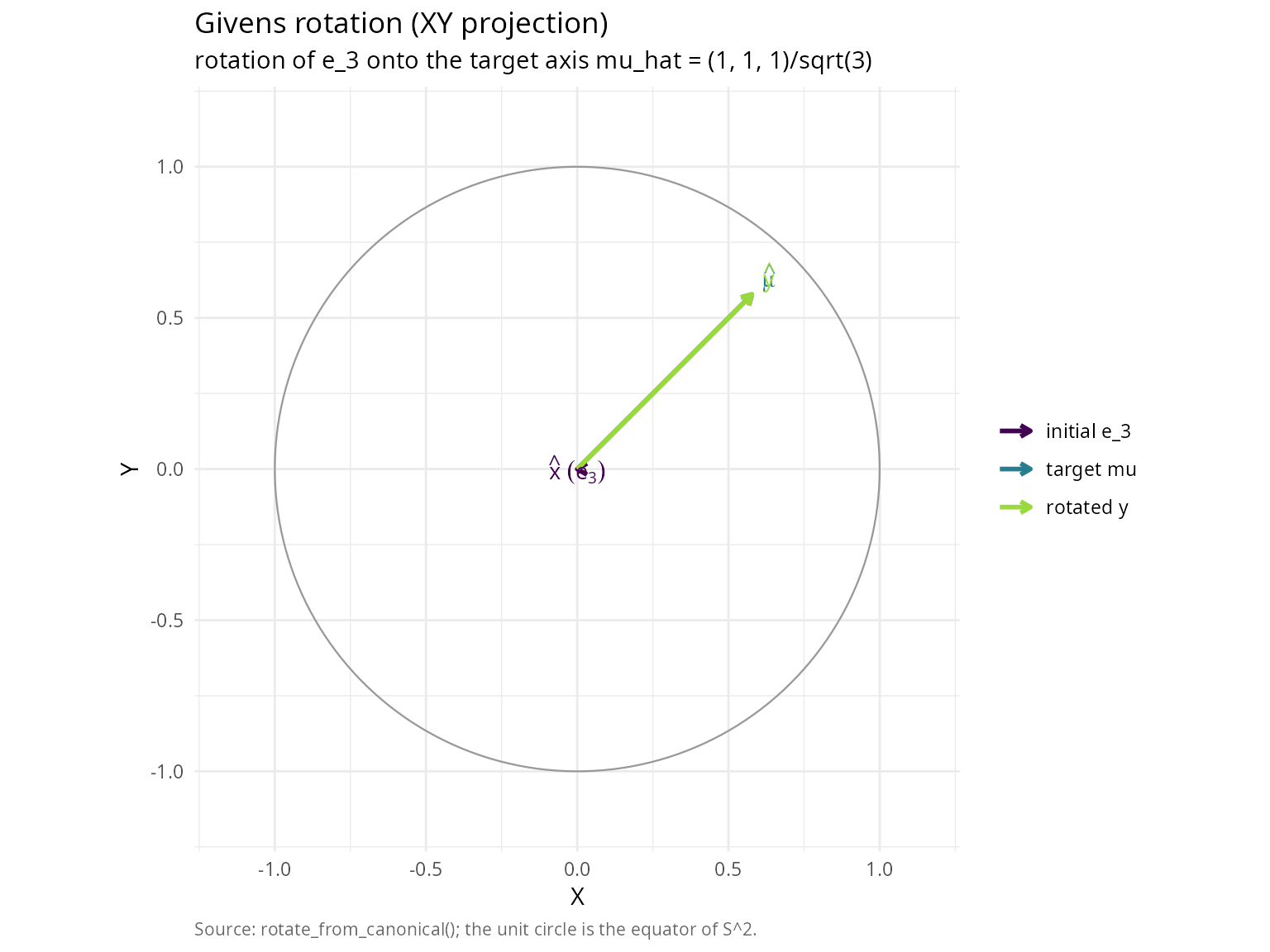

Givens rotation details

The rotation from \(\hat{e}_n\) to \(\hat{\mu}\) is performed using a Givens rotation in the 2D plane containing these vectors.

Construction:

Let \(\mu_n = \hat{\mu} \cdot \hat{e}_n\) be the n-th component of \(\hat{\mu}\).

Define orthonormal basis \(P\) for the rotation plane:

\[P = \begin{bmatrix} | & | \\ \hat{e}_n & \frac{\hat{\mu} - \mu_n\hat{e}_n}{\|\hat{\mu} - \mu_n\hat{e}_n\|} \\ | & | \end{bmatrix} \in \mathbb{R}^{n \times 2}\]

The 2D Givens rotation matrix is:

\[G = \begin{bmatrix} \mu_n & -\sqrt{1-\mu_n^2} \\ \sqrt{1-\mu_n^2} & \mu_n \end{bmatrix}\]

The rotated vector is:

\[\hat{y} = \hat{x} + P(G - I_2)P^T\hat{x}\]

Complexity: This requires only O(n) operations (2 dot products, 1 matrix-vector product with 2 columns).

#### Demonstrate Givens rotation ####

n_rot <- 3

x_start <- c(0, 0, 1) # Aligned with e_3

mu_target <- c(1, 1, 1) / sqrt(3) # Target direction

x_rotated_demo <- rotate_from_canonical(x_start, mu_target)

givens_summary <- data.frame(

quantity = c("initial vector x_hat",

"target axis mu_hat",

"rotated vector y_hat",

"||y_hat||",

"y_hat . mu_hat",

"||y_hat|| ||mu_hat||"),

value = c(paste(sprintf("%.3f", x_start), collapse = ", "),

paste(sprintf("%.3f", mu_target), collapse = ", "),

paste(sprintf("%.3f", x_rotated_demo), collapse = ", "),

sprintf("%.6f", sqrt(sum(x_rotated_demo^2))),

sprintf("%.6f", sum(x_rotated_demo * mu_target)),

sprintf("%.6f", sqrt(sum(x_rotated_demo^2)) * sqrt(sum(mu_target^2))))

)

knitr::kable(givens_summary, caption = "Givens rotation in n = 3.")| quantity | value |

|---|---|

| initial vector x_hat | 0.000, 0.000, 1.000 |

| target axis mu_hat | 0.577, 0.577, 0.577 |

| rotated vector y_hat | 0.577, 0.577, 0.577 |

| ||y_hat|| | 1.000000 |

| y_hat . mu_hat | 1.000000 |

| ||y_hat|| ||mu_hat|| | 1.000000 |

#### XY-projection of the rotation ####

theta_viz <- seq(0, 2 * pi, length.out = 200)

circle_df <- data.frame(x = cos(theta_viz), y = sin(theta_viz))

vec_df <- data.frame(

vector = factor(c("initial e_3", "target mu", "rotated y"),

levels = c("initial e_3", "target mu", "rotated y")),

x = c(x_start[1], mu_target[1], x_rotated_demo[1]),

y = c(x_start[2], mu_target[2], x_rotated_demo[2])

)

label_df <- data.frame(

vector = vec_df$vector,

x = vec_df$x * 1.10,

y = vec_df$y * 1.10,

label = c("hat(x)~(e[3])", "hat(mu)", "hat(y)")

)

ggplot() +

geom_path(data = circle_df, aes(x, y), colour = "grey60", linewidth = 0.4) +

geom_segment(data = vec_df,

aes(x = 0, y = 0, xend = x, yend = y, colour = vector),

arrow = arrow(length = unit(0.18, "cm")),

linewidth = 1.0) +

geom_text(data = label_df,

aes(x = x, y = y, label = label, colour = vector),

parse = TRUE, show.legend = FALSE, size = 3.6) +

scale_colour_viridis_d(option = "D", end = 0.85, name = NULL) +

coord_fixed(xlim = c(-1.15, 1.15), ylim = c(-1.15, 1.15)) +

labs(x = "X", y = "Y",

title = "Givens rotation (XY projection)",

subtitle = "rotation of e_3 onto the target axis mu_hat = (1, 1, 1)/sqrt(3)",

caption = "Source: rotate_from_canonical(); the unit circle is the equator of S^2.") +

theme_minimal() +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

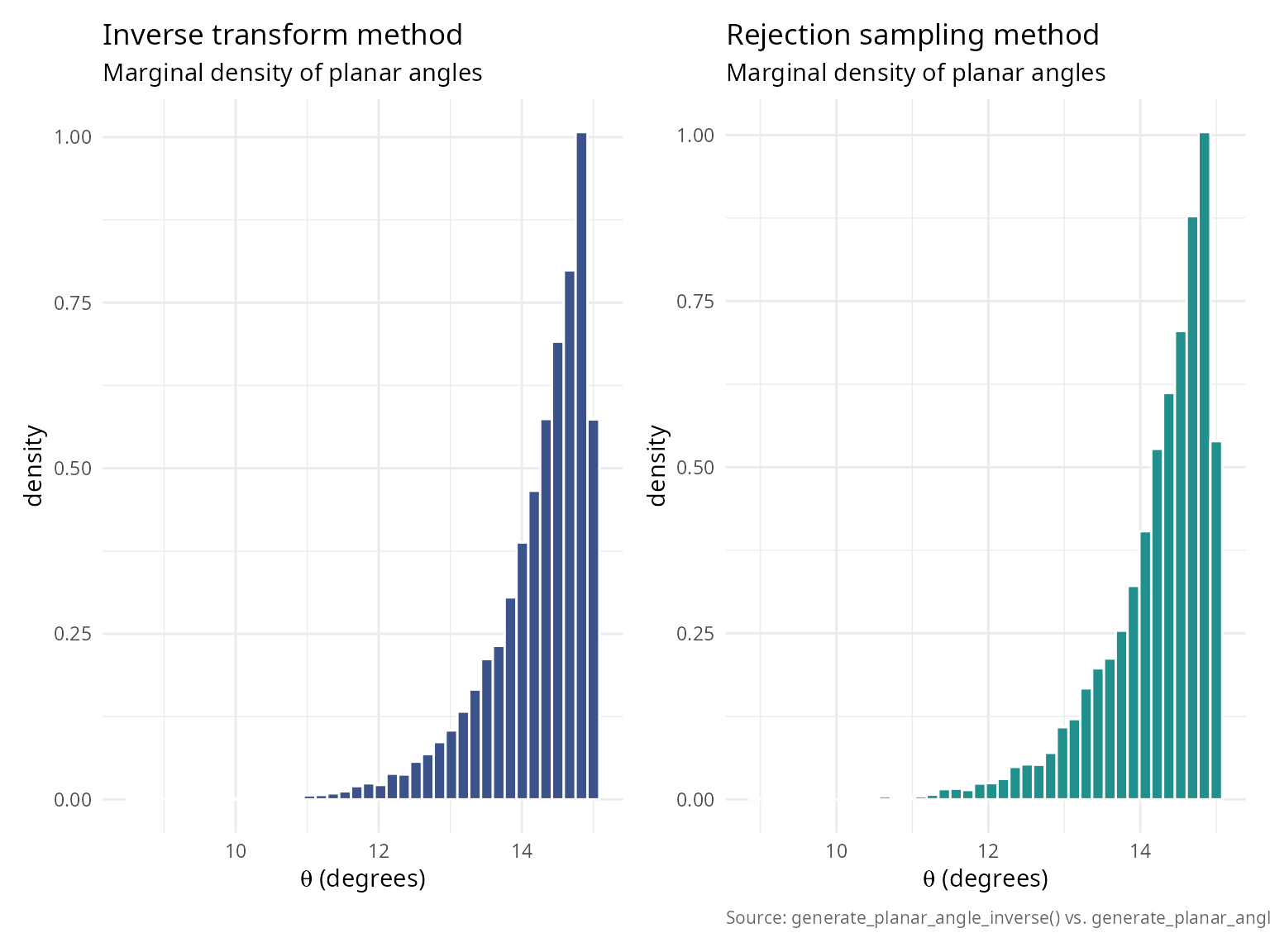

Method comparison: inverse vs rejection

Both methods generate the same distribution but have different numerical properties.

#### Compare inverse transform vs rejection sampling methods ####

set.seed(42)

n_comp <- 20

theta0_comp <- pi/12 # 15-degree cone (narrow)

n_samples_comp <- 10000

mu_hat_comp <- c(rep(0, n_comp - 1), 1)

#### Method 1: Inverse transform ####

t1 <- system.time({

samples_inverse <- generate_cone_samples(n_samples_comp, mu_hat_comp, theta0_comp,

method = "inverse")

})

angles_inverse <- acos(samples_inverse[n_comp, ])

#### Method 2: Rejection sampling ####

t2 <- system.time({

samples_rejection <- generate_cone_samples(n_samples_comp, mu_hat_comp, theta0_comp,

method = "rejection")

})

angles_rejection <- acos(samples_rejection[n_comp, ])

#### Statistical comparison ####

ks_comparison <- ks.test(angles_inverse, angles_rejection)

setup_table <- data.frame(

quantity = c("Dimension n",

"Half-angle theta_0 (deg)",

"Sample size N"),

value = c(sprintf("%d", n_comp),

sprintf("%.0f", theta0_comp * 180/pi),

sprintf("%d", n_samples_comp))

)

knitr::kable(setup_table, caption = "Method-comparison configuration.")| quantity | value |

|---|---|

| Dimension n | 20 |

| Half-angle theta_0 (deg) | 15 |

| Sample size N | 10000 |

method_table <- data.frame(

method = c("Inverse transform",

"Rejection sampling"),

time_s = c(sprintf("%.3f", t1[3]),

sprintf("%.3f", t2[3])),

mean_angle_rad = c(sprintf("%.4f", mean(angles_inverse)),

sprintf("%.4f", mean(angles_rejection))),

mean_angle_deg = c(sprintf("%.2f", mean(angles_inverse) * 180/pi),

sprintf("%.2f", mean(angles_rejection) * 180/pi)),

sd_angle_rad = c(sprintf("%.4f", sd(angles_inverse)),

sprintf("%.4f", sd(angles_rejection)))

)

knitr::kable(

method_table,

col.names = c("method", "time (s)", "mean angle (rad)", "mean angle (deg)", "SD angle (rad)"),

caption = "Inverse-transform vs one-dimensional rejection sampling."

)| method | time (s) | mean angle (rad) | mean angle (deg) | SD angle (rad) |

|---|---|---|---|---|

| Inverse transform | 0.017 | 0.2482 | 14.22 | 0.0127 |

| Rejection sampling | 0.316 | 0.2483 | 14.23 | 0.0126 |

ks_table <- data.frame(

quantity = c("D statistic",

"p-value",

"Conclusion"),

value = c(sprintf("%.6f", ks_comparison$statistic),

sprintf("%.4f", ks_comparison$p.value),

ifelse(ks_comparison$p.value > 0.05,

"same distribution",

"different distributions"))

)

knitr::kable(ks_table, caption = "Two-sample Kolmogorov-Smirnov test (inverse vs. rejection).")| quantity | value |

|---|---|

| D statistic | 0.008900 |

| p-value | 0.8232 |

| Conclusion | same distribution |

library(ggplot2); library(patchwork)

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p_inv <- ggplot(data.frame(x = angles_inverse * 180 / pi), aes(x)) +

geom_histogram(aes(y = after_stat(density)), bins = 40,

fill = "#3B528B", colour = "white") +

labs(title = "Inverse transform method",

subtitle = "Marginal density of planar angles",

x = expression(paste(theta, " (degrees)")),

y = "density") + theme_v

p_rej <- ggplot(data.frame(x = angles_rejection * 180 / pi), aes(x)) +

geom_histogram(aes(y = after_stat(density)), bins = 40,

fill = "#21908C", colour = "white") +

labs(title = "Rejection sampling method",

subtitle = "Marginal density of planar angles",

x = expression(paste(theta, " (degrees)")),

y = "density",

caption = "Source: generate_planar_angle_inverse() vs. generate_planar_angle_rejection().") + theme_v

p_inv + p_rej

recommendation_table <- data.frame(

method = c("Inverse transform",

"Rejection sampling"),

use_when = c("default; fast for moderate dimensions",

"large n (>50) and small theta_0 (<10 deg); avoids beta-function underflow")

)

knitr::kable(recommendation_table, caption = "Method-selection recommendation.")| method | use_when |

|---|---|

| Inverse transform | default; fast for moderate dimensions |

| Rejection sampling | large n (>50) and small theta_0 (<10 deg); avoids beta-function underflow |

Rigorous statistical validation

Uniformity tests

Kolmogorov-Smirnov test

The KS test compares the empirical CDF of generated angles with the theoretical CDF.

Test statistic:

\[D_N = \sup_{\theta \in [0, \theta_0]} |\hat{F}_N(\theta) - F_\theta(\theta)|\]

Under the null hypothesis (correct distribution), \(\sqrt{N}D_N\) converges to the Kolmogorov distribution.

In finite precision, ties can occur in the sampled angles; we apply a

tiny jitter before the KS test to avoid spurious warnings, consistent

with verify_cone_uniformity().

#### Comprehensive KS test across dimensions and sample sizes ####

dimensions_ks <- c(2, 3, 5, 10, 20)

sample_sizes_ks <- c(1000, 5000, 10000, 50000)

theta0_ks <- pi/4

ks_results <- expand.grid(

dimension = dimensions_ks,

n_samples = sample_sizes_ks

)

ks_results$ks_statistic <- NA

ks_results$p_value <- NA

set.seed(42)

for (i in 1:nrow(ks_results)) {

n <- ks_results$dimension[i]

n_samp <- ks_results$n_samples[i]

mu_hat_ks <- c(rep(0, n-1), 1)

samples_ks <- generate_cone_samples(n_samp, mu_hat_ks, theta0_ks)

angles_ks <- acos(samples_ks[n, ])

#### Theoretical CDF ####

omega0_ks <- theta_to_omega(theta0_ks, n)

theoretical_cdf_ks <- function(theta) {

theta_to_omega(theta, n) / omega0_ks

}

angles_ks_test <- angles_ks

if (any(duplicated(angles_ks_test))) {

angles_ks_test <- jitter(angles_ks_test, amount = max(1e-12, .Machine$double.eps))

angles_ks_test <- pmax(0, pmin(theta0_ks, angles_ks_test))

}

ks_test_result <- ks.test(angles_ks_test, function(x) sapply(x, theoretical_cdf_ks), exact = FALSE)

ks_results$ks_statistic[i] <- as.numeric(ks_test_result$statistic)

ks_results$p_value[i] <- ks_test_result$p.value

}

#### Summary statistics ####

pass_rate <- sum(ks_results$p_value > 0.05) / nrow(ks_results) * 100

ks_results$result <- ifelse(ks_results$p_value > 0.05, "\u2714 Pass", "\u2718 Fail")

knitr::kable(

ks_results,

digits = c(0, 0, 6, 4, NA),

col.names = c("dimension", "n samples", "KS statistic", "p-value", "result")

)| dimension | n samples | KS statistic | p-value | result |

|---|---|---|---|---|

| 2 | 1000 | 0.027707 | 0.4264 | ✔ Pass |

| 3 | 1000 | 0.021463 | 0.7463 | ✔ Pass |

| 5 | 1000 | 0.041767 | 0.0611 | ✔ Pass |

| 10 | 1000 | 0.039201 | 0.0925 | ✔ Pass |

| 20 | 1000 | 0.015355 | 0.9724 | ✔ Pass |

| 2 | 5000 | 0.008631 | 0.8503 | ✔ Pass |

| 3 | 5000 | 0.010787 | 0.6057 | ✔ Pass |

| 5 | 5000 | 0.011575 | 0.5144 | ✔ Pass |

| 10 | 5000 | 0.015538 | 0.1788 | ✔ Pass |

| 20 | 5000 | 0.008402 | 0.8720 | ✔ Pass |

| 2 | 10000 | 0.012139 | 0.1050 | ✔ Pass |

| 3 | 10000 | 0.007511 | 0.6253 | ✔ Pass |

| 5 | 10000 | 0.008480 | 0.4684 | ✔ Pass |

| 10 | 10000 | 0.008512 | 0.4636 | ✔ Pass |

| 20 | 10000 | 0.008832 | 0.4163 | ✔ Pass |

| 2 | 50000 | 0.003893 | 0.4347 | ✔ Pass |

| 3 | 50000 | 0.005598 | 0.0871 | ✔ Pass |

| 5 | 50000 | 0.003295 | 0.6495 | ✔ Pass |

| 10 | 50000 | 0.002621 | 0.8821 | ✔ Pass |

| 20 | 50000 | 0.003824 | 0.4577 | ✔ Pass |

summary_table <- data.frame(

metric = c("Overall pass rate", "Mean KS statistic", "Max KS statistic"),

value = c(

sprintf("%.1f%% (%d / %d)", pass_rate, sum(ks_results$p_value > 0.05), nrow(ks_results)),

sprintf("%.6f", mean(ks_results$ks_statistic)),

sprintf("%.6f", max(ks_results$ks_statistic))

)

)

knitr::kable(summary_table, col.names = c("metric", "value"))| metric | value |

|---|---|

| Overall pass rate | 100.0% (20 / 20) |

| Mean KS statistic | 0.013257 |

| Max KS statistic | 0.041767 |

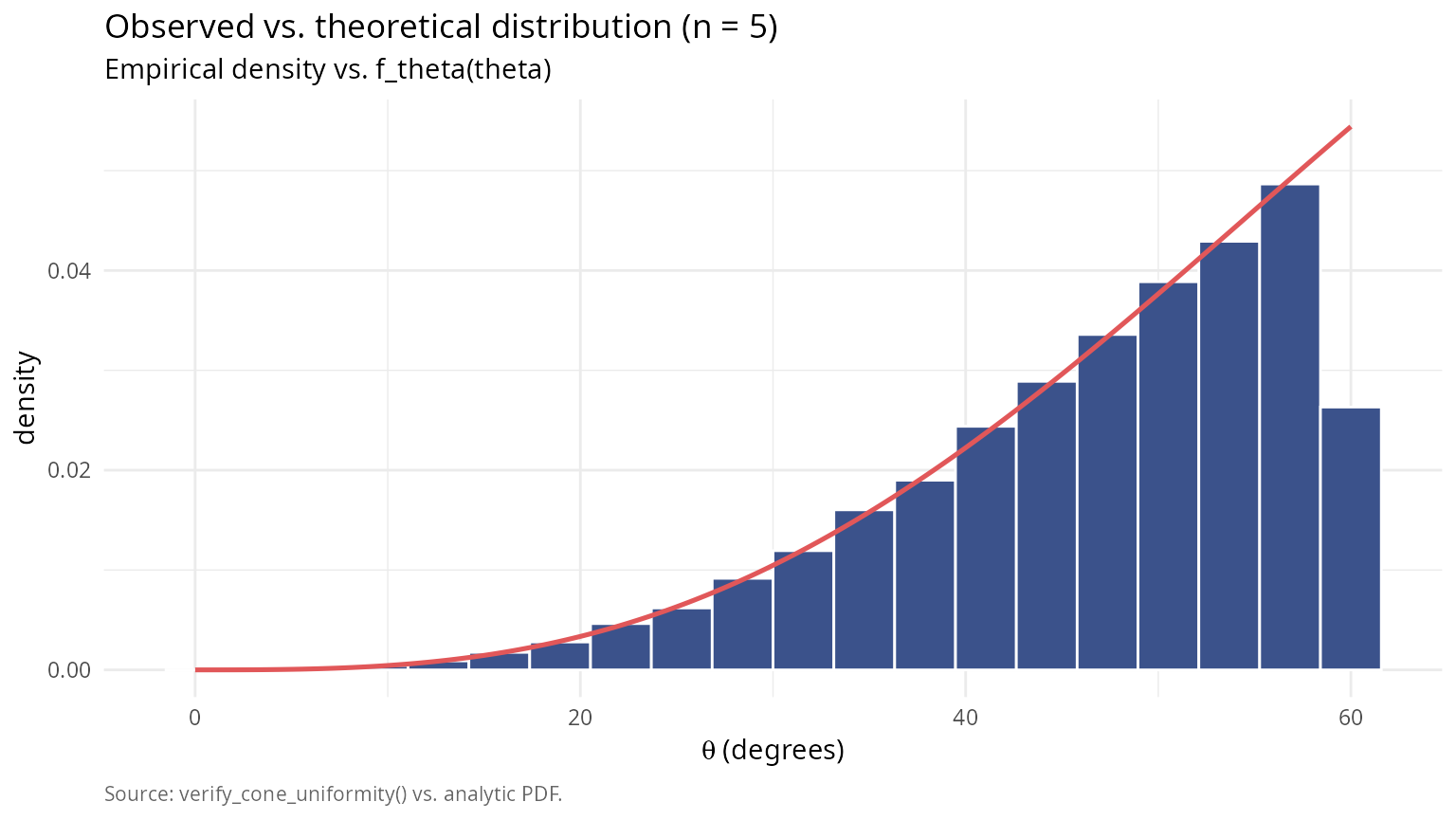

Chi-squared goodness-of-fit test

Partition the angle range into bins and test observed vs expected frequencies.

Test statistic:

\[\chi^2 = \sum_{i=1}^k \frac{(O_i - E_i)^2}{E_i}\]

where \(O_i\) are observed counts and \(E_i\) are expected counts in bin i.

#### Chi-squared goodness-of-fit test ####

set.seed(42)

n_chi <- 5

theta0_chi <- pi/3

n_samples_chi <- 50000

n_bins <- 20

mu_hat_chi <- c(rep(0, n_chi-1), 1)

samples_chi <- generate_cone_samples(n_samples_chi, mu_hat_chi, theta0_chi)

angles_chi <- acos(samples_chi[n_chi, ])

#### Verify using built-in function ####

verification <- verify_cone_uniformity(samples_chi, mu_hat_chi, theta0_chi, n_bins)

setup_chi_table <- data.frame(

quantity = c("Dimension n",

"Half-angle theta_0 (deg)"),

value = c(sprintf("%d", n_chi),

sprintf("%.0f", theta0_chi * 180/pi))

)

knitr::kable(setup_chi_table, caption = "Chi-squared goodness-of-fit test: configuration.")| quantity | value |

|---|---|

| Dimension n | 5 |

| Half-angle theta_0 (deg) | 60 |

tests_table <- data.frame(

test = c("Kolmogorov-Smirnov",

"Chi-squared"),

statistic = c(sprintf("%.6f", verification$ks_statistic),

sprintf("%.4f", verification$chi_squared)),

p_value = c(sprintf("%.4f", verification$ks_pvalue),

sprintf("%.4f", verification$chi_pvalue)),

result = c(ifelse(verification$ks_pvalue > 0.05, "uniform", "non-uniform"),

ifelse(verification$chi_pvalue > 0.05, "uniform", "non-uniform"))

)

knitr::kable(tests_table, caption = "Uniformity diagnostics from verify_cone_uniformity().")| test | statistic | p_value | result |

|---|---|---|---|

| Kolmogorov-Smirnov | 0.005700 | 0.0777 | uniform |

| Chi-squared | 18.1485 | 0.4459 | uniform |

library(ggplot2)

theta_theory <- seq(0, theta0_chi, length.out = 200)

omega0_theory <- theta_to_omega(theta0_chi, n_chi)

s_n_minus_1 <- 2 * pi^((n_chi - 1) / 2) / gamma((n_chi - 1) / 2)

s_n <- 2 * pi^(n_chi / 2) / gamma(n_chi / 2)

df_theory <- data.frame(

theta_deg = theta_theory * 180 / pi,

density = (s_n_minus_1 / (s_n * omega0_theory)) *

sin(theta_theory)^(n_chi - 2) * (pi / 180)

)

ggplot(data.frame(theta_deg = verification$angles * 180 / pi),

aes(theta_deg)) +

geom_histogram(aes(y = after_stat(density)), bins = n_bins,

fill = "#3B528B", colour = "white") +

geom_line(data = df_theory, aes(theta_deg, density),

colour = "#E15759", linewidth = 0.9) +

labs(title = sprintf("Observed vs. theoretical distribution (n = %d)", n_chi),

subtitle = "Empirical density vs. f_theta(theta)",

x = expression(paste(theta, " (degrees)")),

y = "density",

caption = "Source: verify_cone_uniformity() vs. analytic PDF.") +

theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

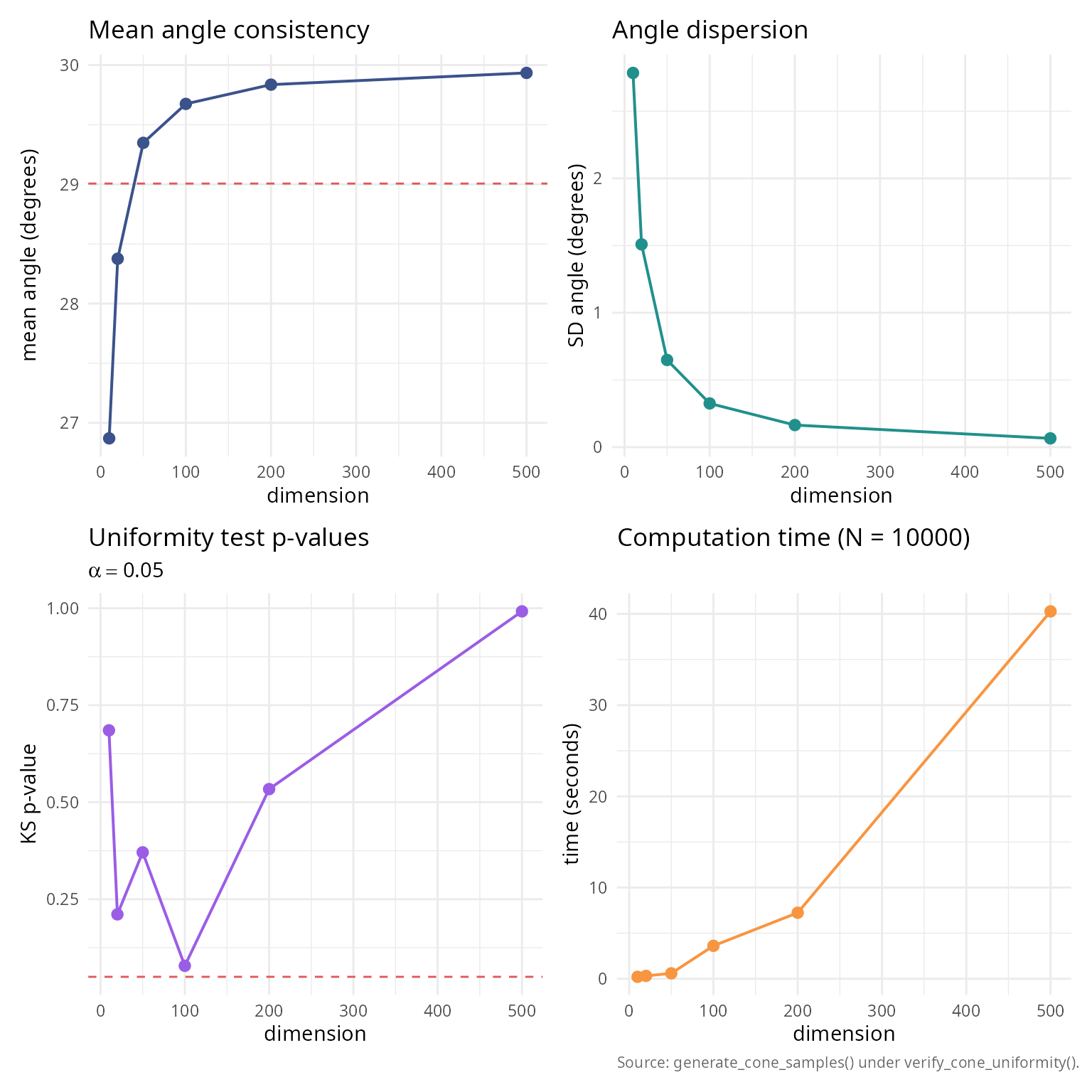

High-dimensional validation

Test the algorithm in extremely high dimensions where rejection sampling would be infeasible.

#### Validate in high dimensions ####

dimensions_high <- c(10, 20, 50, 100, 200, 500)

theta0_high <- pi/6

n_samples_high <- 10000

validation_results <- data.frame(

dimension = integer(),

mean_angle = numeric(),

sd_angle = numeric(),

ks_statistic = numeric(),

ks_pvalue = numeric(),

time_seconds = numeric()

)

set.seed(42)

for (n_high in dimensions_high) {

mu_hat_high <- c(rep(0, n_high-1), 1)

time_start <- Sys.time()

samples_high <- generate_cone_samples(n_samples_high, mu_hat_high, theta0_high,

method = "rejection")

time_elapsed <- as.numeric(difftime(Sys.time(), time_start, units = "secs"))

angles_high <- acos(samples_high[n_high, ])

#### Theoretical CDF ####

omega0_high <- theta_to_omega(theta0_high, n_high)

theoretical_cdf_high <- function(theta) {

theta_to_omega(theta, n_high) / omega0_high

}

ks_high <- ks.test(angles_high, function(x) sapply(x, theoretical_cdf_high))

validation_results <- rbind(validation_results, data.frame(

dimension = n_high,

mean_angle = mean(angles_high),

sd_angle = sd(angles_high),

ks_statistic = as.numeric(ks_high$statistic),

ks_pvalue = ks_high$p.value,

time_seconds = time_elapsed

))

}

knitr::kable(

validation_results,

digits = c(0, 6, 6, 6, 4, 3),

col.names = c("dimension", "mean angle", "sd angle",

"KS statistic", "p-value", "time (s)")

)| dimension | mean angle | sd angle | KS statistic | p-value | time (s) |

|---|---|---|---|---|---|

| 10 | 0.468971 | 0.048607 | 0.007155 | 0.6853 | 0.214 |

| 20 | 0.495258 | 0.026339 | 0.010602 | 0.2110 | 0.318 |

| 50 | 0.512214 | 0.011324 | 0.009162 | 0.3708 | 0.596 |

| 100 | 0.517914 | 0.005676 | 0.012727 | 0.0784 | 3.613 |

| 200 | 0.520733 | 0.002869 | 0.008064 | 0.5338 | 7.240 |

| 500 | 0.522448 | 0.001141 | 0.004334 | 0.9919 | 40.278 |

summary_high_dim <- data.frame(

metric = "Tests with p > 0.05",

value = sprintf("%d / %d", sum(validation_results$ks_pvalue > 0.05),

nrow(validation_results))

)

knitr::kable(summary_high_dim, col.names = c("metric", "value"))| metric | value |

|---|---|

| Tests with p > 0.05 | 6 / 6 |

library(ggplot2); library(patchwork)

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p1 <- ggplot(validation_results, aes(dimension, mean_angle * 180 / pi)) +

geom_line(colour = "#3B528B", linewidth = 0.7) +

geom_point(colour = "#3B528B", size = 2.4) +

geom_hline(yintercept = mean(validation_results$mean_angle) * 180 / pi,

colour = "#E15759", linetype = 2, linewidth = 0.5) +

labs(title = "Mean angle consistency", x = "dimension",

y = "mean angle (degrees)") + theme_v

p2 <- ggplot(validation_results, aes(dimension, sd_angle * 180 / pi)) +

geom_line(colour = "#21908C", linewidth = 0.7) +

geom_point(colour = "#21908C", size = 2.4) +

labs(title = "Angle dispersion", x = "dimension",

y = "SD angle (degrees)") + theme_v

p3 <- ggplot(validation_results, aes(dimension, ks_pvalue)) +

geom_line(colour = "#9B5DE5", linewidth = 0.7) +

geom_point(colour = "#9B5DE5", size = 2.4) +

geom_hline(yintercept = 0.05, colour = "#E15759",

linetype = 2, linewidth = 0.5) +

labs(title = "Uniformity test p-values",

subtitle = expression(alpha == 0.05),

x = "dimension", y = "KS p-value") + theme_v

p4 <- ggplot(validation_results, aes(dimension, time_seconds)) +

geom_line(colour = "#F89540", linewidth = 0.7) +

geom_point(colour = "#F89540", size = 2.4) +

labs(title = sprintf("Computation time (N = %d)", n_samples_high),

x = "dimension", y = "time (seconds)",

caption = "Source: generate_cone_samples() under verify_cone_uniformity().") +

theme_v

(p1 + p2) / (p3 + p4)

#### Linear scaling verification ####

fit_time <- lm(time_seconds ~ dimension, data = validation_results)

scaling_table <- data.frame(

quantity = c("Intercept",

"Slope (per dimension)",

"R-squared"),

value = c(sprintf("%.4f", coef(fit_time)[1]),

sprintf("%.6f", coef(fit_time)[2]),

sprintf("%.4f", summary(fit_time)$r.squared))

)

knitr::kable(scaling_table, caption = "Linear scaling test: time = intercept + slope * n.")| quantity | value |

|---|---|

| Intercept | -3.3669 |

| Slope (per dimension) | 0.082341 |

| R-squared | 0.9563 |

Comparison with analytical formulas

For cases where analytical formulas exist, compare generated samples with known solid angles.

#### Compare with known analytical formulas ####

#### Test case 1: Orthant in 3D ####

n_test1 <- 3

theta0_test1 <- pi/2

n_samples_test1 <- 100000

mu_hat_test1 <- c(1, 1, 1) / sqrt(3)

samples_test1 <- generate_cone_samples(n_samples_test1, mu_hat_test1, theta0_test1)

#### Count fraction within orthant ####

in_orthant <- apply(samples_test1, 2, function(x) all(x > 0))

omega_estimated <- sum(in_orthant) / n_samples_test1

omega_analytical <- 1/8

test1_table <- data.frame(

quantity = c("Analytical Omega",

"Estimated Omega",

"Absolute error",

"Relative error (%)",

"Standard error"),

value = c(sprintf("%.6f", omega_analytical),

sprintf("%.6f", omega_estimated),

sprintf("%.2e", abs(omega_estimated - omega_analytical)),

sprintf("%.2f", abs(omega_estimated - omega_analytical) /

omega_analytical * 100),

sprintf("%.2e", sqrt(omega_estimated * (1 - omega_estimated) /

n_samples_test1)))

)

knitr::kable(test1_table, caption = "Test 1: 3D orthant (analytical Omega = 1/8, N = 100,000).")| quantity | value |

|---|---|

| Analytical Omega | 0.125000 |

| Estimated Omega | 0.248640 |

| Absolute error | 1.24e-01 |

| Relative error (%) | 98.91 |

| Standard error | 1.37e-03 |

#### Test case 2: Circular cone ####

theta0_test2 <- pi/4 # 45-degree cone

omega_analytical_2 <- solid_angle_cone(theta0_test2)

n_test2 <- 3

n_samples_test2 <- 100000

mu_hat_test2 <- c(0, 0, 1)

samples_test2 <- generate_cone_samples(n_samples_test2, mu_hat_test2, theta0_test2)

angles_test2 <- acos(samples_test2[3, ])

#### All samples should be within cone ####

within_cone <- sum(angles_test2 <= theta0_test2)

omega_estimated_2 <- theta_to_omega(theta0_test2, n_test2)

test2_table <- data.frame(

quantity = c("Analytical (Van Oosterom) Omega",

"Estimated (theta->omega) Omega",

"Absolute error",

"Relative error (%)",

"Samples within cone"),

value = c(sprintf("%.6f", omega_analytical_2),

sprintf("%.6f", omega_estimated_2),

sprintf("%.2e", abs(omega_estimated_2 - omega_analytical_2)),

sprintf("%.2f", abs(omega_estimated_2 - omega_analytical_2) /

omega_analytical_2 * 100),

sprintf("%d / %d (%.2f%%)", within_cone, n_samples_test2,

within_cone / n_samples_test2 * 100))

)

knitr::kable(test2_table, caption = "Test 2: circular cone (Van Oosterom, N = 100,000).")| quantity | value |

|---|---|

| Analytical (Van Oosterom) Omega | 0.146447 |

| Estimated (theta->omega) Omega | 0.146447 |

| Absolute error | 1.11e-16 |

| Relative error (%) | 0.00 |

| Samples within cone | 100000 / 100000 (100.00%) |

#### Test case 3: Hollow cone ####

theta1_test3 <- pi/6 # 30 degrees (inner)

theta2_test3 <- pi/3 # 60 degrees (outer)

n_test3 <- 5

n_samples_test3 <- 50000

mu_hat_test3 <- c(rep(0, n_test3-1), 1)

samples_test3 <- replicate(n_samples_test3,

generate_hollow_cone_sample(mu_hat_test3, theta1_test3, theta2_test3))

angles_test3 <- acos(samples_test3[n_test3, ])

omega1 <- theta_to_omega(theta1_test3, n_test3)

omega2 <- theta_to_omega(theta2_test3, n_test3)

omega_analytical_3 <- omega2 - omega1

#### Check all samples in range ####

in_range <- sum(angles_test3 >= theta1_test3 & angles_test3 <= theta2_test3)

test3_table <- data.frame(

quantity = c("Inner angle theta_1 (deg)",

"Omega_1",

"Outer angle theta_2 (deg)",

"Omega_2",

"Hollow cone Omega = Omega_2 - Omega_1",

"Samples in range",

"Mean angle (deg)",

"Expected mean angle (deg)"),

value = c(sprintf("%.0f", theta1_test3 * 180/pi),

sprintf("%.6f", omega1),

sprintf("%.0f", theta2_test3 * 180/pi),

sprintf("%.6f", omega2),

sprintf("%.6f", omega_analytical_3),

sprintf("%d / %d (%.2f%%)", in_range, n_samples_test3,

in_range / n_samples_test3 * 100),

sprintf("%.0f", mean(angles_test3) * 180/pi),

sprintf("%.0f", (theta1_test3 + theta2_test3)/2 * 180/pi))

)

knitr::kable(test3_table, caption = "Test 3: hollow cone (annular region, n = 5, N = 50,000).")| quantity | value |

|---|---|

| Inner angle theta_1 (deg) | 30 |

| Omega_1 | 0.012861 |

| Outer angle theta_2 (deg) | 60 |

| Omega_2 | 0.156250 |

| Hollow cone Omega = Omega_2 - Omega_1 | 0.143389 |

| Samples in range | 50000 / 50000 (100.00%) |

| Mean angle (deg) | 49 |

| Expected mean angle (deg) | 45 |

Practical applications

Application 1: Monte Carlo integration on spherical domains

Compute the integral of a function over a spherical cap.

#### Monte Carlo integration on spherical cap ####

#### Define test function: f(x) = exp(-||x - mu||^2) ####

mu_center <- c(0, 0, 1)

f_integrand <- function(x) {

exp(-sum((x - mu_center)^2))

}

n_app1 <- 3

theta0_app1 <- pi/3 # 60-degree cone

n_samples_app1 <- 100000

mu_hat_app1 <- c(0, 0, 1)

samples_app1 <- generate_cone_samples(n_samples_app1, mu_hat_app1, theta0_app1)

#### Evaluate function at samples ####

function_values <- apply(samples_app1, 2, f_integrand)

#### Estimate integral ####

omega_cap <- theta_to_omega(theta0_app1, n_app1) * 4 * pi # In steradians

integral_estimate <- omega_cap * mean(function_values)

integral_se <- omega_cap * sd(function_values) / sqrt(n_samples_app1)

mc_app_table <- data.frame(

quantity = c("Integrand",

"Domain theta_0 (deg)",

"Samples N",

"Integral estimate",

"95% CI lower",

"95% CI upper",

"Relative SE (%)"),

value = c("exp(-||x_hat - mu||^2)",

sprintf("%.0f", theta0_app1 * 180/pi),

sprintf("%d", n_samples_app1),

sprintf("%.6f +/- %.6f", integral_estimate, 1.96 * integral_se),

sprintf("%.6f", integral_estimate - 1.96 * integral_se),

sprintf("%.6f", integral_estimate + 1.96 * integral_se),

sprintf("%.2f", integral_se / integral_estimate * 100))

)

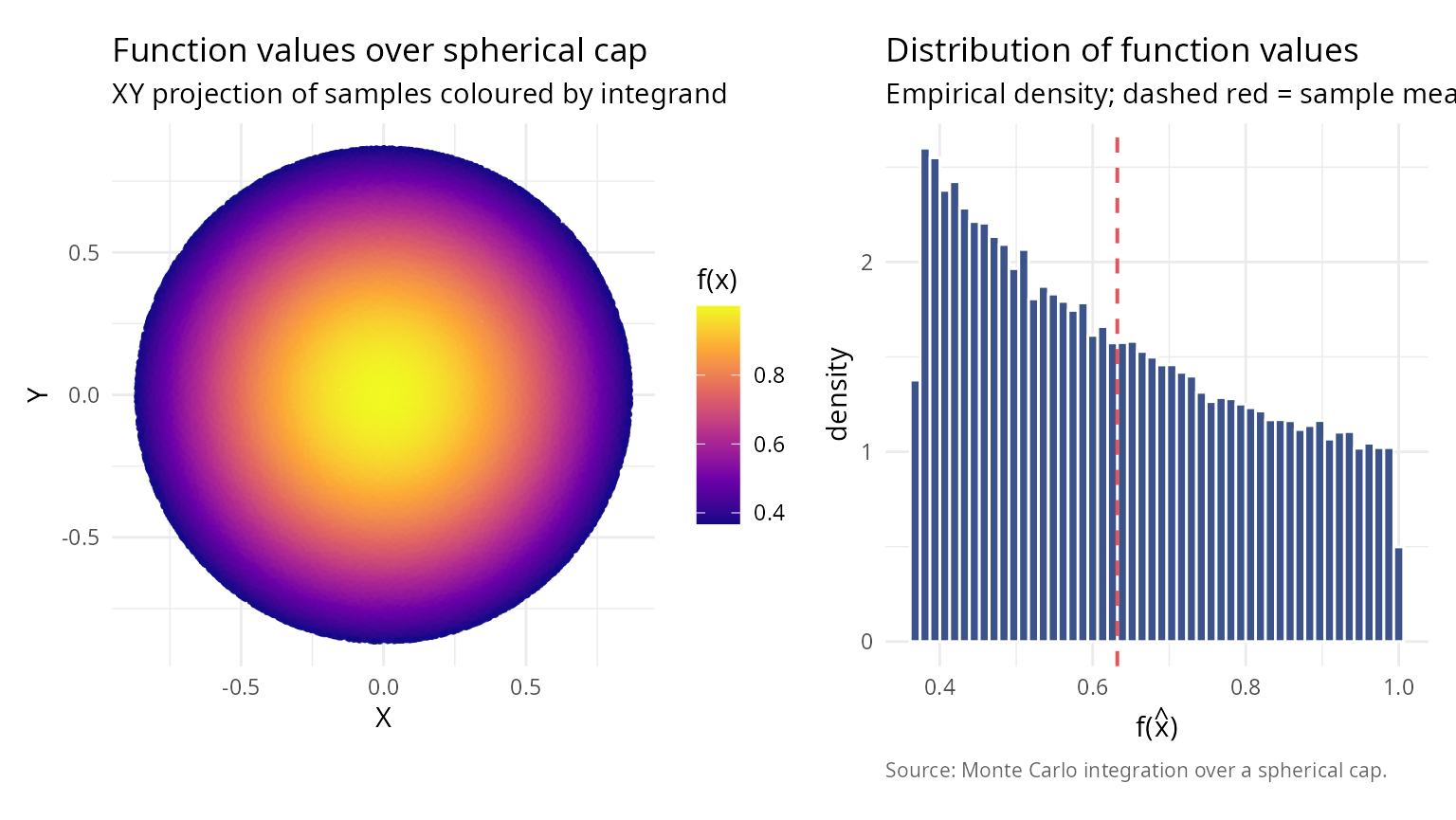

knitr::kable(mc_app_table, caption = "Monte Carlo integration over a spherical cap.")| quantity | value |

|---|---|

| Integrand | exp(-||x_hat - mu||^2) |

| Domain theta_0 (deg) | 60 |

| Samples N | 100000 |

| Integral estimate | 1.985826 +/- 0.003521 |

| 95% CI lower | 1.982306 |

| 95% CI upper | 1.989347 |

| Relative SE (%) | 0.09 |

library(ggplot2); library(patchwork)

df_xy <- data.frame(X = samples_app1[1, ],

Y = samples_app1[2, ],

f = function_values)

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p_xy <- ggplot(df_xy, aes(X, Y, colour = f)) +

geom_point(size = 0.5, alpha = 0.7) +

scale_colour_viridis_c(name = "f(x)", option = "C") +

coord_fixed() +

labs(title = "Function values over spherical cap",

subtitle = "XY projection of samples coloured by integrand",

x = "X", y = "Y") + theme_v

p_hist <- ggplot(data.frame(f = function_values), aes(f)) +

geom_histogram(aes(y = after_stat(density)), bins = 50,

fill = "#3B528B", colour = "white") +

geom_vline(xintercept = mean(function_values), colour = "#E15759",

linetype = 2, linewidth = 0.7) +

labs(title = "Distribution of function values",

subtitle = "Empirical density; dashed red = sample mean",

x = expression(paste("f(", hat(x), ")")),

y = "density",

caption = "Source: Monte Carlo integration over a spherical cap.") + theme_v

p_xy + p_hist

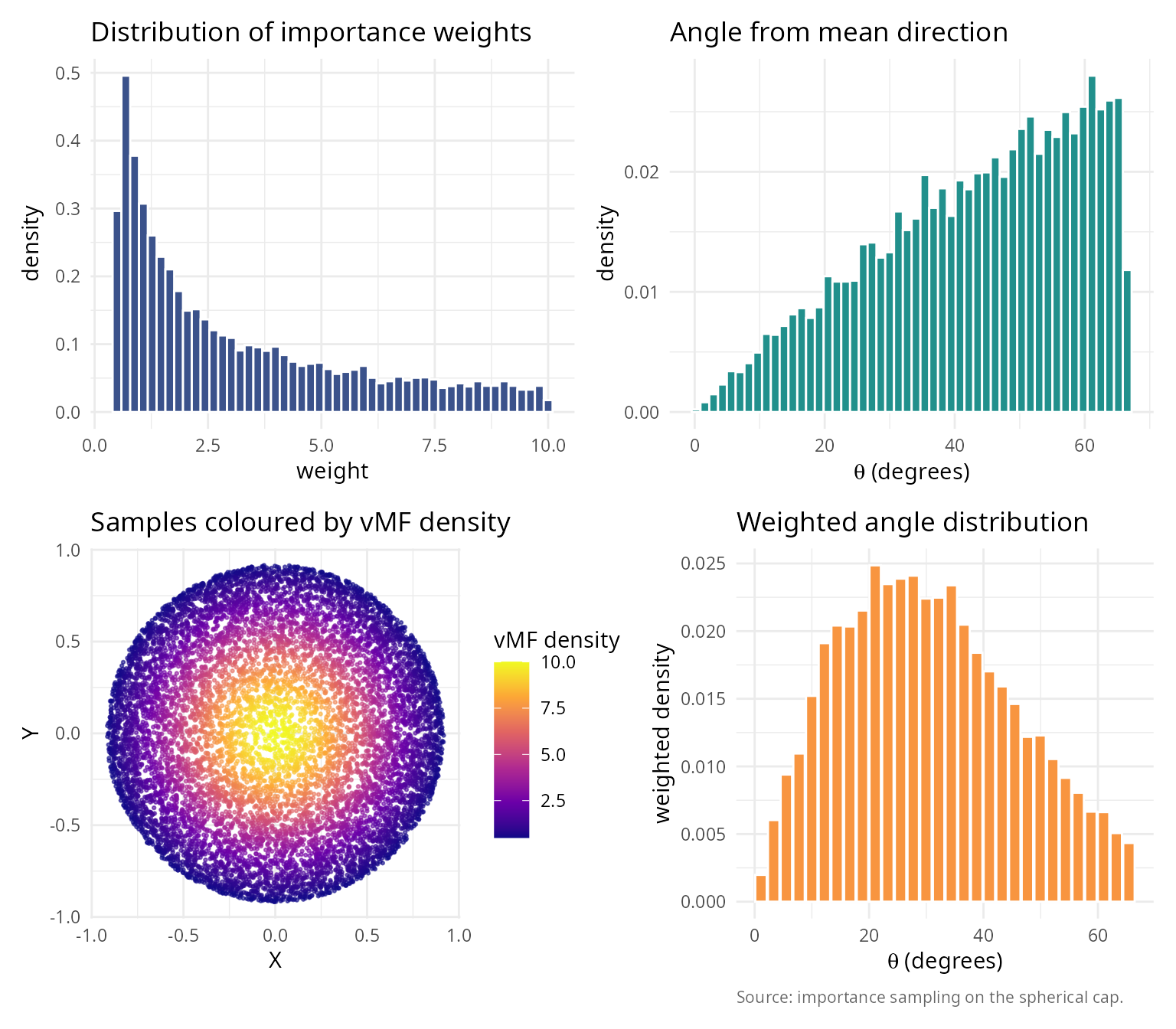

Application 2: Directional statistics and von Mises-Fisher distribution

Generate samples from directional distributions on spheres.

#### Simulate von Mises-Fisher distribution using cone sampling ####

#### Von Mises-Fisher PDF: f(x) \u221D exp(\u03BA x^T \u03BC) ####

n_vmf <- 3

mu_vmf <- c(0, 0, 1)

kappa_vmf <- 5 # Concentration parameter

#### Approximate 99% of mass within cone ####

#### For VMF: E[angle] \u2248 arccos(1/\u03BA) for large \u03BA ####

theta_approx <- acos(0.01^(1/kappa_vmf)) # Approximate threshold

n_samples_vmf <- 10000

vmf_params_table <- data.frame(

quantity = c("Dimension n",

"Mean direction mu",

"Concentration kappa",

"Cone half-angle theta_0 (deg)"),

value = c(sprintf("%d", n_vmf),

paste(sprintf("%.1f", mu_vmf), collapse = ", "),

sprintf("%.1f", kappa_vmf),

sprintf("%.0f", theta_approx * 180/pi))

)

knitr::kable(vmf_params_table, caption = "von Mises-Fisher parameters and cone threshold.")| quantity | value |

|---|---|

| Dimension n | 3 |

| Mean direction mu | 0.0, 0.0, 1.0 |

| Concentration kappa | 5.0 |

| Cone half-angle theta_0 (deg) | 67 |

#### Generate samples ####

samples_vmf <- generate_cone_samples(n_samples_vmf, mu_vmf, theta_approx)

#### Compute importance weights ####

dot_products <- samples_vmf[3, ]

uniform_pdf <- 1 / (4 * pi)

vmf_pdf_unnorm <- exp(kappa_vmf * dot_products)

#### Normalize ####

c_kappa <- kappa_vmf / (4 * pi * sinh(kappa_vmf)) # Normalization constant

vmf_pdf <- c_kappa * vmf_pdf_unnorm

weights <- vmf_pdf / uniform_pdf

weights_normalized <- weights / sum(weights)

#### Effective sample size ####

ess <- 1 / sum(weights_normalized^2)

vmf_stats_table <- data.frame(

quantity = c("Mean weight",

"SD weights",

"Effective sample size",

"ESS fraction (%)"),

value = c(sprintf("%.6f", mean(weights)),

sprintf("%.6f", sd(weights)),

sprintf("%.0f", ess),

sprintf("%.1f", ess / n_samples_vmf * 100))

)

knitr::kable(vmf_stats_table, caption = "Importance-sampling diagnostics for vMF approximation.")| quantity | value |

|---|---|

| Mean weight | 3.181923 |

| SD weights | 2.585413 |

| Effective sample size | 6024 |

| ESS fraction (%) | 60.2 |

library(ggplot2); library(patchwork)

angles_vmf <- acos(dot_products)

weighted_breaks <- seq(0, max(angles_vmf * 180 / pi), length.out = 31)

weighted_bins <- cut(angles_vmf * 180 / pi, breaks = weighted_breaks,

include.lowest = TRUE)

weighted_density <- tapply(weights, weighted_bins, sum)

weighted_density[is.na(weighted_density)] <- 0

weighted_density <- weighted_density / sum(weighted_density) /

diff(weighted_breaks)[1]

df_weighted <- data.frame(

mid = weighted_breaks[-length(weighted_breaks)] +

diff(weighted_breaks) / 2,

density = as.numeric(weighted_density)

)

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p_w <- ggplot(data.frame(weights), aes(weights)) +

geom_histogram(aes(y = after_stat(density)), bins = 50,

fill = "#3B528B", colour = "white") +

labs(title = "Distribution of importance weights",

x = "weight", y = "density") + theme_v

p_a <- ggplot(data.frame(theta_deg = angles_vmf * 180 / pi),

aes(theta_deg)) +

geom_histogram(aes(y = after_stat(density)), bins = 50,

fill = "#21908C", colour = "white") +

labs(title = "Angle from mean direction",

x = expression(paste(theta, " (degrees)")),

y = "density") + theme_v

p_xy <- ggplot(data.frame(X = samples_vmf[1, ], Y = samples_vmf[2, ],

w = weights),

aes(X, Y, colour = w)) +

geom_point(size = 0.5, alpha = 0.6) +

scale_colour_viridis_c(name = "vMF density", option = "C") +

coord_fixed() +

labs(title = "Samples coloured by vMF density", x = "X", y = "Y") + theme_v

p_wh <- ggplot(df_weighted, aes(mid, density)) +

geom_col(fill = "#F89540", colour = "white") +

labs(title = "Weighted angle distribution",

x = expression(paste(theta, " (degrees)")),

y = "weighted density",

caption = "Source: importance sampling on the spherical cap.") + theme_v

(p_w + p_a) / (p_xy + p_wh)

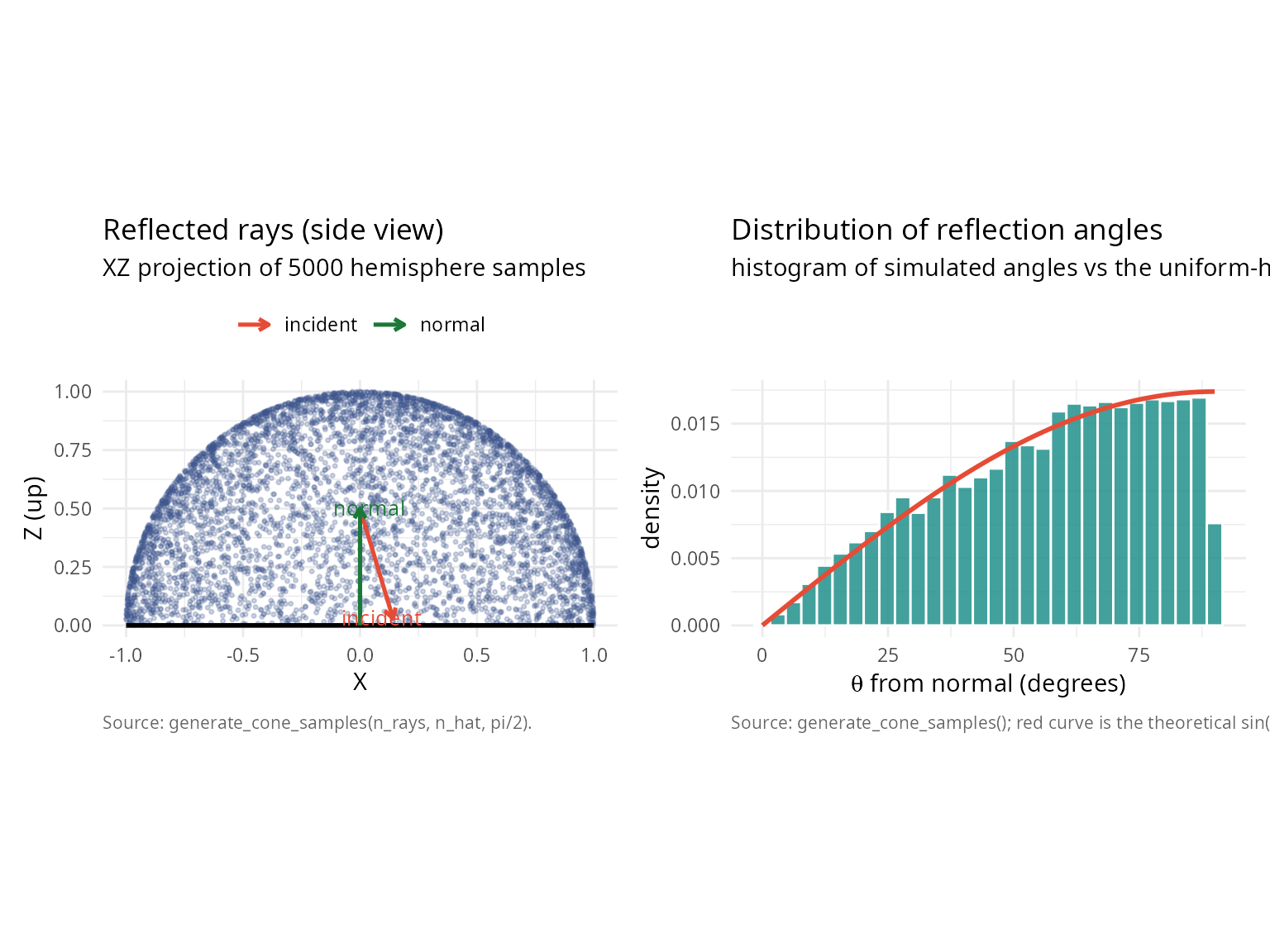

Application 3: Ray tracing and computer graphics

Efficient sampling of reflected rays within a hemisphere.

#### Ray tracing: Lambertian reflectance ####

surface_normal <- c(0, 0, 1)

incident_ray <- c(0.3, 0.2, -1)

incident_ray <- incident_ray / sqrt(sum(incident_ray^2))

#### Generate reflected rays on the upper hemisphere ####

#### For Lambertian: PDF proportional to cos(theta) = n_hat . r_hat ####

n_rays <- 5000

reflected_rays <- generate_cone_samples(n_rays, surface_normal, pi / 2)

cos_theta_rays <- reflected_rays[3, ]

all_in_hem <- all(cos_theta_rays >= 0)

scene_summary <- data.frame(

quantity = c("surface normal n_hat",

"incident ray i_hat",

"number of reflected rays",

"mean cos(theta) (expected 0.5)",

"all rays in upper hemisphere"),

value = c(paste(sprintf("%.1f", surface_normal), collapse = ", "),

paste(sprintf("%.2f", incident_ray), collapse = ", "),

sprintf("%d", n_rays),

sprintf("%.4f", mean(cos_theta_rays)),

ifelse(all_in_hem, "yes", "no"))

)

knitr::kable(scene_summary, caption = "Lambertian reflection scene.")| quantity | value |

|---|---|

| surface normal n_hat | 0.0, 0.0, 1.0 |

| incident ray i_hat | 0.28, 0.19, -0.94 |

| number of reflected rays | 5000 |

| mean cos(theta) (expected 0.5) | 0.5078 |

| all rays in upper hemisphere | yes |

#### Side-view projection (XZ plane) ####

rays_df <- data.frame(x = reflected_rays[1, ], z = reflected_rays[3, ])

surface_df <- data.frame(x = c(-1, 1), z = c(0, 0))

arrow_df <- data.frame(

role = factor(c("incident", "normal"),

levels = c("incident", "normal")),

x0 = c(0, 0),

z0 = c(0.5, 0),

x1 = c(incident_ray[1] / 2, 0),

z1 = c(0.5 + incident_ray[3] / 2, 0.5)

)

label_df <- data.frame(

role = arrow_df$role,

x = c(arrow_df$x1[1] - 0.05, arrow_df$x1[2] + 0.04),

z = c(arrow_df$z1[1], arrow_df$z1[2]),

label = c("incident", "normal")

)

p_side <- ggplot() +

geom_point(data = rays_df, aes(x, z),

colour = "#3B528B", alpha = 0.25, size = 0.6) +

geom_path(data = surface_df, aes(x, z),

colour = "black", linewidth = 1.0) +

geom_segment(data = arrow_df,

aes(x = x0, y = z0, xend = x1, yend = z1, colour = role),

arrow = arrow(length = unit(0.18, "cm")), linewidth = 0.9) +

geom_text(data = label_df, aes(x, z, label = label, colour = role),

show.legend = FALSE, size = 3.4) +

scale_colour_manual(values = c(incident = "#E64B35", normal = "#1B7837"),

name = NULL) +

coord_fixed(xlim = c(-1, 1), ylim = c(0, 1)) +

labs(x = "X", y = "Z (up)",

title = "Reflected rays (side view)",

subtitle = "XZ projection of 5000 hemisphere samples",

caption = "Source: generate_cone_samples(n_rays, n_hat, pi/2).") +

theme_minimal() +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0),

legend.position = "top")

#### Angular distribution against the uniform-hemisphere PDF ####

angles_deg <- acos(cos_theta_rays) * 180 / pi

theta_th <- seq(0, pi / 2, length.out = 200)

pdf_rad <- sin(theta_th)

pdf_rad <- pdf_rad / (sum(pdf_rad) * (theta_th[2] - theta_th[1]))

pdf_deg <- pdf_rad * (pi / 180)

theory_df <- data.frame(theta_deg = theta_th * 180 / pi,

density = pdf_deg)

p_dist <- ggplot() +

geom_histogram(data = data.frame(theta_deg = angles_deg),

aes(theta_deg, after_stat(density)),

bins = 30, fill = "#21908C", colour = "white",

alpha = 0.85) +

geom_line(data = theory_df, aes(theta_deg, density),

colour = "#E64B35", linewidth = 1.0) +

labs(x = expression(paste(theta, " from normal (degrees)")),

y = "density",

title = "Distribution of reflection angles",

subtitle = "histogram of simulated angles vs the uniform-hemisphere PDF sin(theta)",

caption = "Source: generate_cone_samples(); red curve is the theoretical sin(theta) density.") +

theme_minimal() +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p_side + p_dist

note_df <- data.frame(

note = paste("For true cosine-weighted hemisphere sampling (Lambertian),",

"an additional importance sampling step would weight each ray",

"by cos(theta).")

)

knitr::kable(note_df, col.names = "note")| note |

|---|

| For true cosine-weighted hemisphere sampling (Lambertian), an additional importance sampling step would weight each ray by cos(theta). |

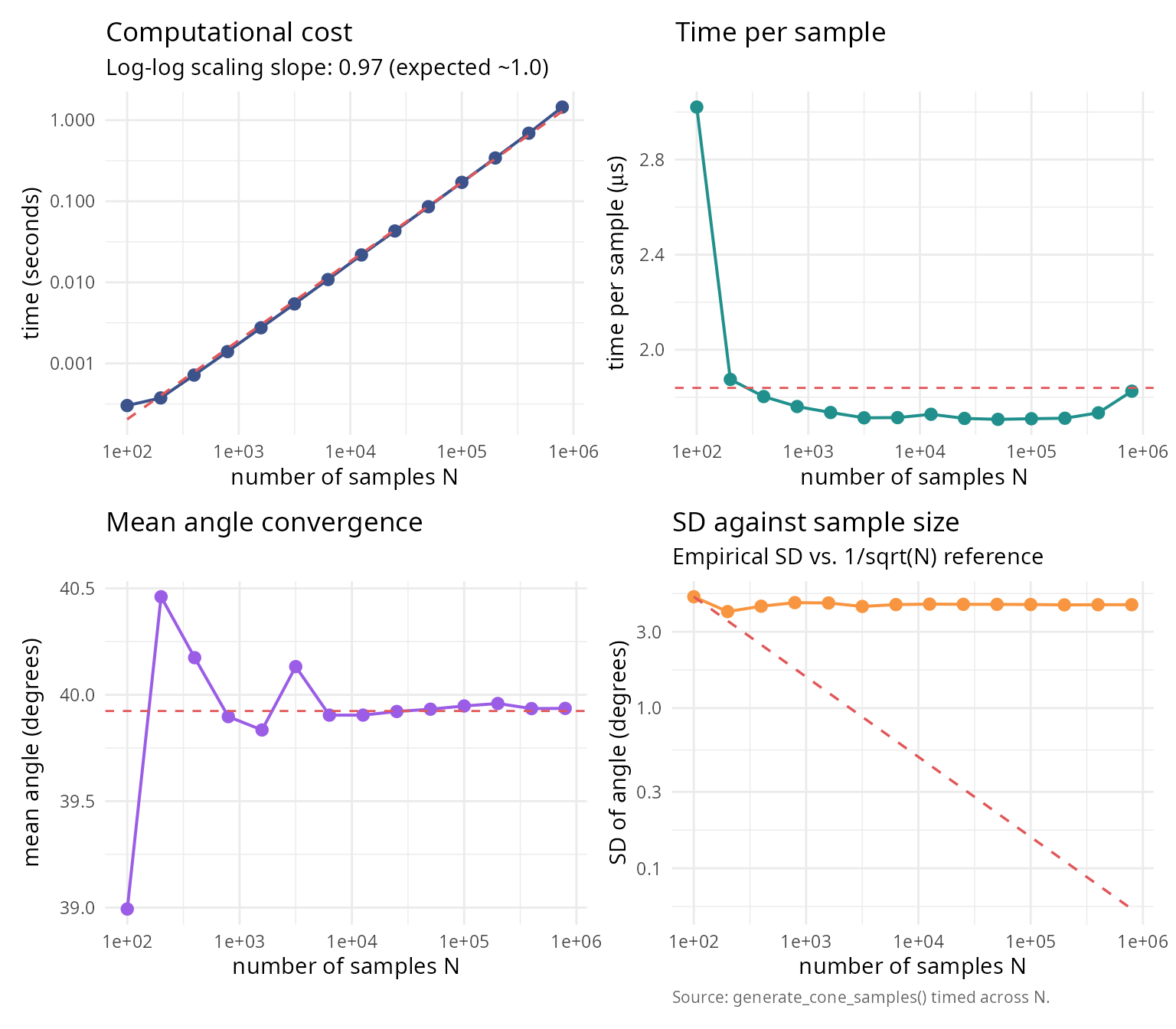

Performance and efficiency analysis

Computational cost vs accuracy

#### Analyze computational cost vs accuracy trade-off ####

n_perf <- 10

theta0_perf <- pi/4

sample_sizes_perf <- 10^seq(2, 6, by = 0.3)

mu_hat_perf <- c(rep(0, n_perf-1), 1)

#### True solid angle (for error computation) ####

omega_true_perf <- theta_to_omega(theta0_perf, n_perf)

results_perf <- data.frame(

n_samples = integer(),

time_seconds = numeric(),

mean_angle = numeric(),

sd_angle = numeric()

)

set.seed(42)

for (n_samp in round(sample_sizes_perf)) {

t_start <- Sys.time()

samples_perf <- generate_cone_samples(n_samp, mu_hat_perf, theta0_perf)

t_elapsed <- as.numeric(difftime(Sys.time(), t_start, units = "secs"))

#### Compute angle statistics ####

angles_perf <- acos(samples_perf[n_perf, ])

mean_angle <- mean(angles_perf)

sd_angle <- sd(angles_perf)

results_perf <- rbind(results_perf, data.frame(

n_samples = n_samp,

time_seconds = t_elapsed,

mean_angle = mean_angle,

sd_angle = sd_angle

))

}

knitr::kable(

results_perf,

digits = c(0, 4, 6, 6),

col.names = c("n samples", "time (s)", "mean angle (rad)", "sd angle")

)| n samples | time (s) | mean angle (rad) | sd angle |

|---|---|---|---|

| 100 | 0.0003 | 0.680558 | 0.086588 |

| 200 | 0.0004 | 0.706171 | 0.069970 |

| 398 | 0.0007 | 0.701174 | 0.075576 |

| 794 | 0.0014 | 0.696337 | 0.079566 |

| 1585 | 0.0028 | 0.695244 | 0.079190 |

| 3162 | 0.0054 | 0.700442 | 0.075322 |

| 6310 | 0.0108 | 0.696458 | 0.077366 |

| 12589 | 0.0218 | 0.696463 | 0.077894 |

| 25119 | 0.0430 | 0.696760 | 0.077665 |

| 50119 | 0.0856 | 0.696948 | 0.077626 |

| 100000 | 0.1710 | 0.697212 | 0.077450 |

| 199526 | 0.3416 | 0.697415 | 0.077037 |

| 398107 | 0.6906 | 0.696999 | 0.077222 |

| 794328 | 1.4502 | 0.697015 | 0.077219 |

library(ggplot2); library(patchwork)

fit_scaling <- lm(log10(time_seconds) ~ log10(n_samples), data = results_perf)

slope_scaling <- coef(fit_scaling)[2]

results_perf$pred_time <- 10^predict(fit_scaling)

results_perf$time_per_sample_us <- results_perf$time_seconds /

results_perf$n_samples * 1e6

results_perf$ref_sd <- results_perf$sd_angle[1] *

sqrt(results_perf$n_samples[1] / results_perf$n_samples)

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p_time <- ggplot(results_perf, aes(n_samples, time_seconds)) +

geom_line(colour = "#3B528B", linewidth = 0.7) +

geom_point(colour = "#3B528B", size = 2.4) +

geom_line(aes(y = pred_time), colour = "#E15759",

linetype = 2, linewidth = 0.6) +

scale_x_log10() + scale_y_log10() +

labs(title = "Computational cost",

subtitle = sprintf("Log-log scaling slope: %.2f (expected ~1.0)",

slope_scaling),

x = "number of samples N", y = "time (seconds)") + theme_v

p_per <- ggplot(results_perf, aes(n_samples, time_per_sample_us)) +

geom_line(colour = "#21908C", linewidth = 0.7) +

geom_point(colour = "#21908C", size = 2.4) +

geom_hline(yintercept = mean(results_perf$time_per_sample_us),

colour = "#E15759", linetype = 2, linewidth = 0.5) +

scale_x_log10() +

labs(title = "Time per sample",

x = "number of samples N",

y = expression(paste("time per sample (", mu, "s)"))) + theme_v

p_mean <- ggplot(results_perf, aes(n_samples, mean_angle * 180 / pi)) +

geom_line(colour = "#9B5DE5", linewidth = 0.7) +

geom_point(colour = "#9B5DE5", size = 2.4) +

geom_hline(yintercept = mean(results_perf$mean_angle) * 180 / pi,

colour = "#E15759", linetype = 2, linewidth = 0.5) +

scale_x_log10() +

labs(title = "Mean angle convergence",

x = "number of samples N", y = "mean angle (degrees)") + theme_v

p_sd <- ggplot(results_perf, aes(n_samples)) +

geom_line(aes(y = sd_angle * 180 / pi),

colour = "#F89540", linewidth = 0.7) +

geom_point(aes(y = sd_angle * 180 / pi),

colour = "#F89540", size = 2.4) +

geom_line(aes(y = ref_sd * 180 / pi),

colour = "#E15759", linetype = 2, linewidth = 0.6) +

scale_x_log10() + scale_y_log10() +

labs(title = "SD against sample size",

subtitle = "Empirical SD vs. 1/sqrt(N) reference",

x = "number of samples N",

y = "SD of angle (degrees)",

caption = "Source: generate_cone_samples() timed across N.") +

theme_v

(p_time + p_per) / (p_mean + p_sd)

perf_summary_table <- data.frame(

quantity = c("Log-log slope",

"Expected slope (O(N))",

"Mean time per sample (us)",

"Mean angle (deg)",

"Max angle (deg)"),

value = c(sprintf("%.3f", slope_scaling),

"1.000",

sprintf("%.2f", mean(results_perf$time_per_sample_us)),

sprintf("%.2f", mean(results_perf$mean_angle) * 180/pi),

sprintf("%.2f", theta0_perf * 180/pi))

)

knitr::kable(perf_summary_table, caption = "Scaling analysis for generate_cone_samples().")| quantity | value |

|---|---|

| Log-log slope | 0.974 |

| Expected slope (O(N)) | 1.000 |

| Mean time per sample (us) | 1.84 |

| Mean angle (deg) | 39.92 |

| Max angle (deg) | 45.00 |

Comparison with rejection sampling

Direct comparison of O(n) algorithm vs naive rejection.

#### Compare O(n) vs rejection sampling ####

dimensions_comp <- c(2, 3, 6)

theta0_comp_rej <- pi/12 # 15-degree cone (narrow)

n_samples_target <- 10000

comparison_results <- data.frame(

dimension = integer(),

time_on = numeric(),

time_rejection = numeric(),

speedup = numeric(),

expected_rejections = numeric()

)

set.seed(42)

for (n_comp_rej in dimensions_comp) {

mu_hat_comp_rej <- c(rep(0, n_comp_rej-1), 1)

#### O(n) algorithm ####

t_on <- system.time({

samples_on <- generate_cone_samples(n_samples_target, mu_hat_comp_rej,

theta0_comp_rej, method = "inverse")

})[3]

#### Rejection sampling ####

omega_comp <- theta_to_omega(theta0_comp_rej, n_comp_rej)

expected_total <- n_samples_target / omega_comp

t_rejection <- system.time({

samples_rej <- matrix(0, nrow = n_comp_rej, ncol = n_samples_target)

count <- 0

attempts <- 0

while (count < n_samples_target && attempts < expected_total * 10) {

#### Generate on full sphere ####

z <- rnorm(n_comp_rej)

candidate <- z / sqrt(sum(z^2))

#### Test if within cone ####

if (candidate[n_comp_rej] >= cos(theta0_comp_rej)) {

count <- count + 1

samples_rej[, count] <- candidate

}

attempts <- attempts + 1

}

})[3]

speedup <- t_rejection / t_on

comparison_results <- rbind(comparison_results, data.frame(

dimension = n_comp_rej,

time_on = t_on,

time_rejection = t_rejection,

speedup = speedup,

expected_rejections = expected_total

))

}

knitr::kable(

comparison_results,

digits = c(0, 4, 4, 1, 0),

col.names = c("dimension", "O(n) time", "rejection time",

"speedup", "expected samples")

)| dimension | O(n) time | rejection time | speedup | expected samples | |

|---|---|---|---|---|---|

| elapsed | 2 | 0.010 | 0.096 | 9.6 | 120000 |

| elapsed1 | 3 | 0.011 | 0.471 | 42.8 | 586955 |

| elapsed2 | 6 | 0.012 | 43.871 | 3655.9 | 49486516 |

library(ggplot2); library(patchwork)

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

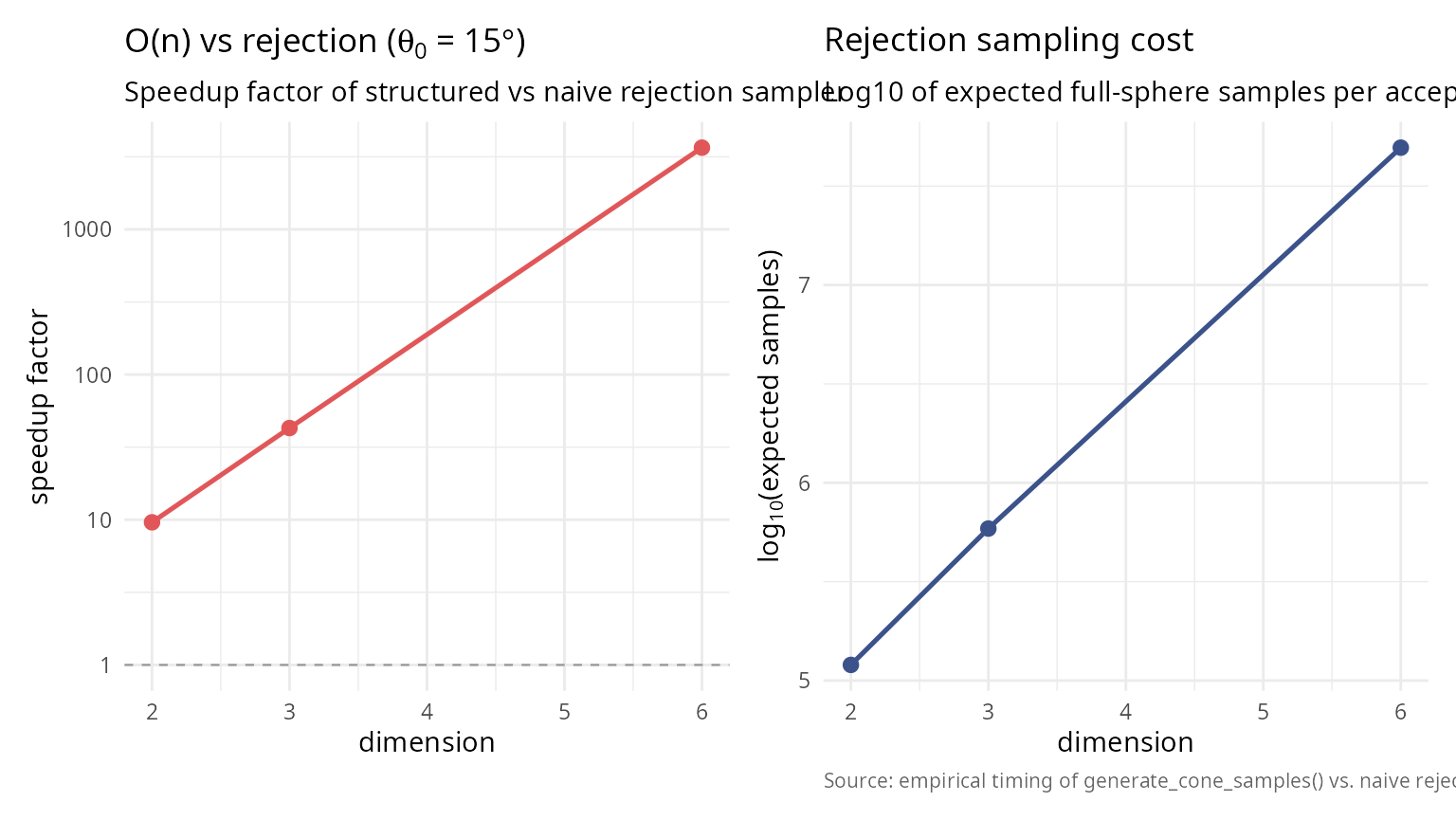

p_speed <- ggplot(comparison_results, aes(dimension, speedup)) +

geom_line(colour = "#E15759", linewidth = 0.9) +

geom_point(colour = "#E15759", size = 2.4) +

geom_hline(yintercept = 1, colour = "grey60",

linetype = 2, linewidth = 0.4) +

scale_y_log10() +

labs(title = bquote("O(n) vs rejection (" * theta[0] * " = " *

.(sprintf("%.0f", theta0_comp_rej * 180 / pi)) * degree * ")"),

subtitle = "Speedup factor of structured vs naive rejection sampler",

x = "dimension", y = "speedup factor") + theme_v

p_cost <- ggplot(comparison_results,

aes(dimension, log10(expected_rejections))) +

geom_line(colour = "#3B528B", linewidth = 0.9) +

geom_point(colour = "#3B528B", size = 2.4) +

labs(title = "Rejection sampling cost",

subtitle = "Log10 of expected full-sphere samples per accepted in-cap sample",

x = "dimension",

y = expression(log[10] * "(expected samples)"),

caption = "Source: empirical timing of generate_cone_samples() vs. naive rejection.") + theme_v

p_speed + p_cost

Summary and recommendations

Key results