Monte Carlo methods for solid angle computation: convergence, error analysis, and validation

Pablo Almaraz, RElab

2026-05-06

Source:vignettes/monte-carlo-methods.Rmd

monte-carlo-methods.Rmd

library(SolidAngleR)

library(ggplot2)

library(gridExtra)Introduction

Monte Carlo methods provide a powerful alternative approach to solid

angle computation, particularly valuable when analytical formulas are

unavailable or complex geometric regions must be analyzed. This vignette

presents a rigorous exploration of the

solid_angle_monte_carlo() function, examining the

theoretical foundations of spherical Monte Carlo integration;

convergence analysis with varying sample sizes; error characterization

and uncertainty quantification; comparison with analytical methods

across dimensions; computational efficiency trade-offs; and practical

recommendations for method selection. The Monte Carlo approach estimates

solid angles by uniformly sampling points on the unit sphere and

computing the fraction falling within the region of interest. While

conceptually simple, this method exhibits rich statistical behavior

worthy of detailed investigation.

Theoretical foundations

Monte Carlo integration on the sphere

The solid angle \(\Omega\) subtended by a region \(\mathcal{R}\) on the unit sphere \(S^2\) is defined as:

\[\Omega = \int_{\mathcal{R}} dS\]

where \(dS\) is the differential surface area element. For a measurable region with characteristic function \(\chi_{\mathcal{R}}(\mathbf{x})\) (equals 1 if \(\mathbf{x} \in \mathcal{R}\), 0 otherwise):

\[\Omega = \int_{S^2} \chi_{\mathcal{R}}(\mathbf{x}) \, dS\]

Monte Carlo integration estimates this integral by:

\[\hat{\Omega}_N = \frac{4\pi}{N} \sum_{i=1}^{N} \chi_{\mathcal{R}}(\mathbf{x}_i)\]

where \(\{\mathbf{x}_i\}_{i=1}^N\) are points sampled uniformly from \(S^2\), and \(4\pi\) is the total surface area of the unit sphere.

Uniform sampling on the sphere

Uniform distribution on \(S^2\) requires equal probability density over all surface elements. The Marsaglia method (1972) [1] achieves this by:

- Generate \(\mathbf{z} \sim \mathcal{N}(0, I_3)\) (3D standard Gaussian)

- Normalize: \(\mathbf{x} = \mathbf{z} / \|\mathbf{z}\|\)

Theorem (Marsaglia, 1972 [1]): The normalized Gaussian vector is uniformly distributed on the unit sphere.

#### Verify uniform sampling on sphere ####

set.seed(42)

n_samples <- 10000

#### Generate samples ####

points <- matrix(rnorm(3 * n_samples), ncol = 3)

norms <- sqrt(rowSums(points^2))

points <- points / norms

#### Convert to spherical coordinates ####

theta <- acos(points[, 3]) # Polar angle

phi <- atan2(points[, 2], points[, 1]) # Azimuthal angle

#### Test uniformity: cos(\u03B8) should be uniform on [-1, 1] ####

cos_theta <- points[, 3]

#### Kolmogorov-Smirnov test ####

ks_result <- ks.test(cos_theta, "punif", -1, 1)

uniformity_table <- data.frame(

quantity = c("Kolmogorov-Smirnov D",

"p-value",

"Conclusion"),

value = c(sprintf("%.6f", ks_result$statistic),

sprintf("%.6f", ks_result$p.value),

ifelse(ks_result$p.value > 0.05,

"uniform distribution (p > 0.05)",

"non-uniform (p < 0.05)"))

)

knitr::kable(uniformity_table, caption = "Uniformity test for sphere sampling.")| quantity | value |

|---|---|

| Kolmogorov-Smirnov D | 0.008685 |

| p-value | 0.437666 |

| Conclusion | uniform distribution (p > 0.05) |

library(ggplot2)

library(patchwork)

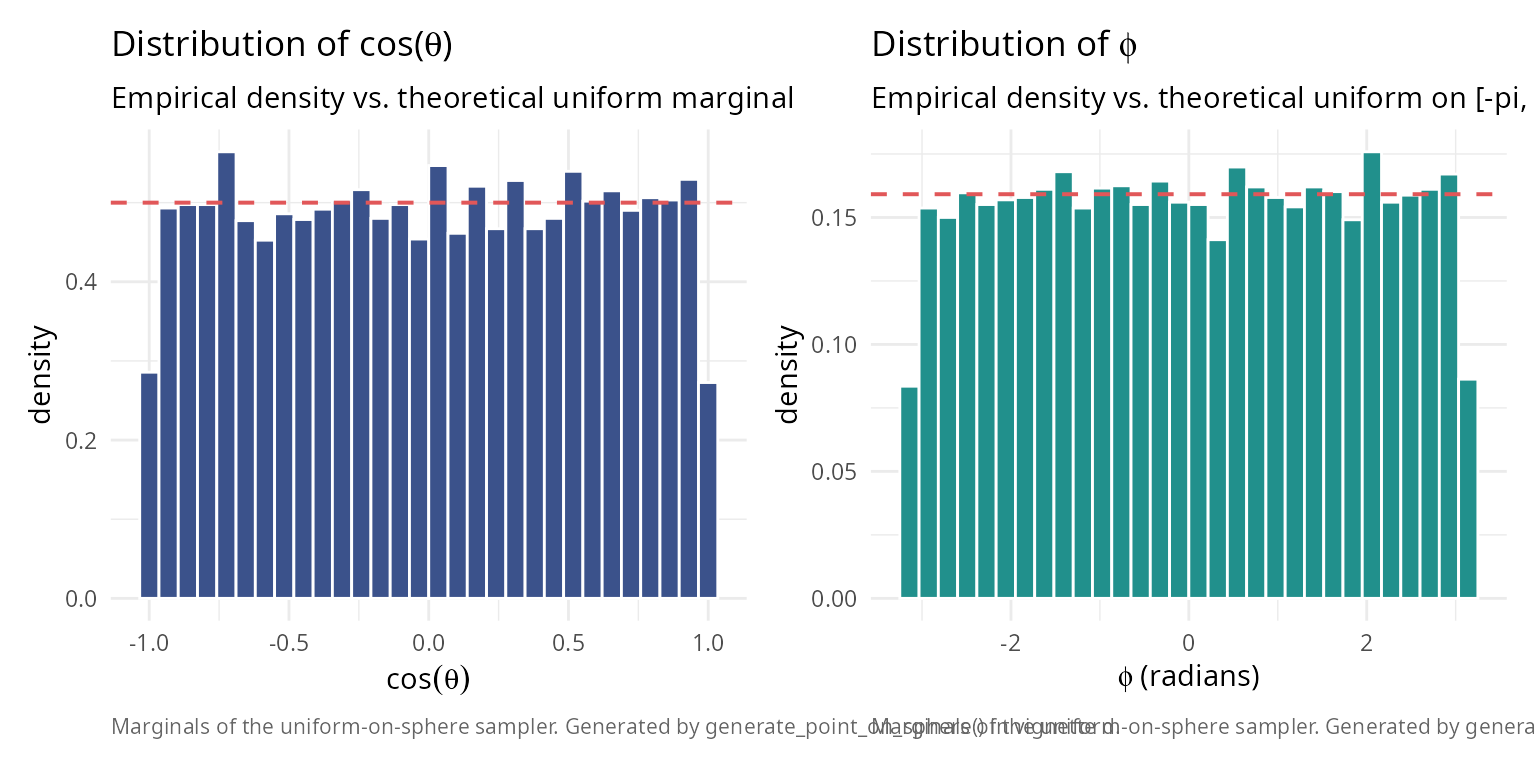

caption_text <- "Marginals of the uniform-on-sphere sampler. Generated by generate_point_on_sphere() in vignette d."

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p_cos <- ggplot(data.frame(cos_theta), aes(cos_theta)) +

geom_histogram(aes(y = after_stat(density)), bins = 30,

fill = "#3B528B", colour = "white") +

geom_hline(yintercept = 0.5, colour = "#E15759",

linetype = 2, linewidth = 0.7) +

labs(title = expression(paste("Distribution of cos(", theta, ")")),

subtitle = "Empirical density vs. theoretical uniform marginal",

x = expression(cos(theta)), y = "density",

caption = caption_text) +

theme_v

p_phi <- ggplot(data.frame(phi), aes(phi)) +

geom_histogram(aes(y = after_stat(density)), bins = 30,

fill = "#21908C", colour = "white") +

geom_hline(yintercept = 1 / (2 * pi), colour = "#E15759",

linetype = 2, linewidth = 0.7) +

labs(title = expression(paste("Distribution of ", phi)),

subtitle = "Empirical density vs. theoretical uniform on [-pi, pi]",

x = expression(paste(phi, " (radians)")), y = "density",

caption = caption_text) +

theme_v

p_cos + p_phi

Statistical properties

Let \(p = \Omega / 4\pi\) be the true fraction of the sphere covered by \(\mathcal{R}\). The estimator \(\hat{p}_N = \frac{1}{N}\sum_{i=1}^N \chi_{\mathcal{R}}(\mathbf{x}_i)\) follows a binomial distribution:

\[N \hat{p}_N \sim \text{Binomial}(N, p)\]

Expected value:

\[\mathbb{E}[\hat{\Omega}_N] = 4\pi p = \Omega\]

The estimator is unbiased.

Variance:

\[\text{Var}(\hat{\Omega}_N) = \frac{(4\pi)^2 p(1-p)}{N} = \frac{16\pi^2 p(1-p)}{N}\]

Standard error:

\[\text{SE}(\hat{\Omega}_N) = \frac{4\pi\sqrt{p(1-p)}}{\sqrt{N}} = O(N^{-1/2})\]

Central Limit Theorem: For large \(N\):

\[\frac{\hat{\Omega}_N - \Omega}{\text{SE}(\hat{\Omega}_N)} \xrightarrow{d} \mathcal{N}(0, 1)\]

This enables construction of confidence intervals:

\[\Omega \in \left[\hat{\Omega}_N \pm z_{\alpha/2} \cdot \text{SE}(\hat{\Omega}_N)\right]\]

where \(z_{\alpha/2}\) is the standard normal quantile (e.g., \(z_{0.025} = 1.96\) for 95% confidence).

Convergence analysis

Theoretical convergence rate

The Monte Carlo error decreases as \(O(N^{-1/2})\), independent of dimension. This contrasts with deterministic quadrature rules, where high-dimensional integration suffers the “curse of dimensionality.”

To achieve relative error \(\epsilon\), we need:

\[\frac{\text{SE}}{\Omega} \approx \epsilon \implies N \approx \frac{16\pi^2 p(1-p)}{(\epsilon \Omega)^2} = \frac{(1-p)}{p \epsilon^2}\]

For \(p = 1/8\) (orthant): \(N \approx 7/\epsilon^2\)

- 1% accuracy: \(N \approx 70{,}000\)

- 0.1% accuracy: \(N \approx 7{,}000{,}000\)

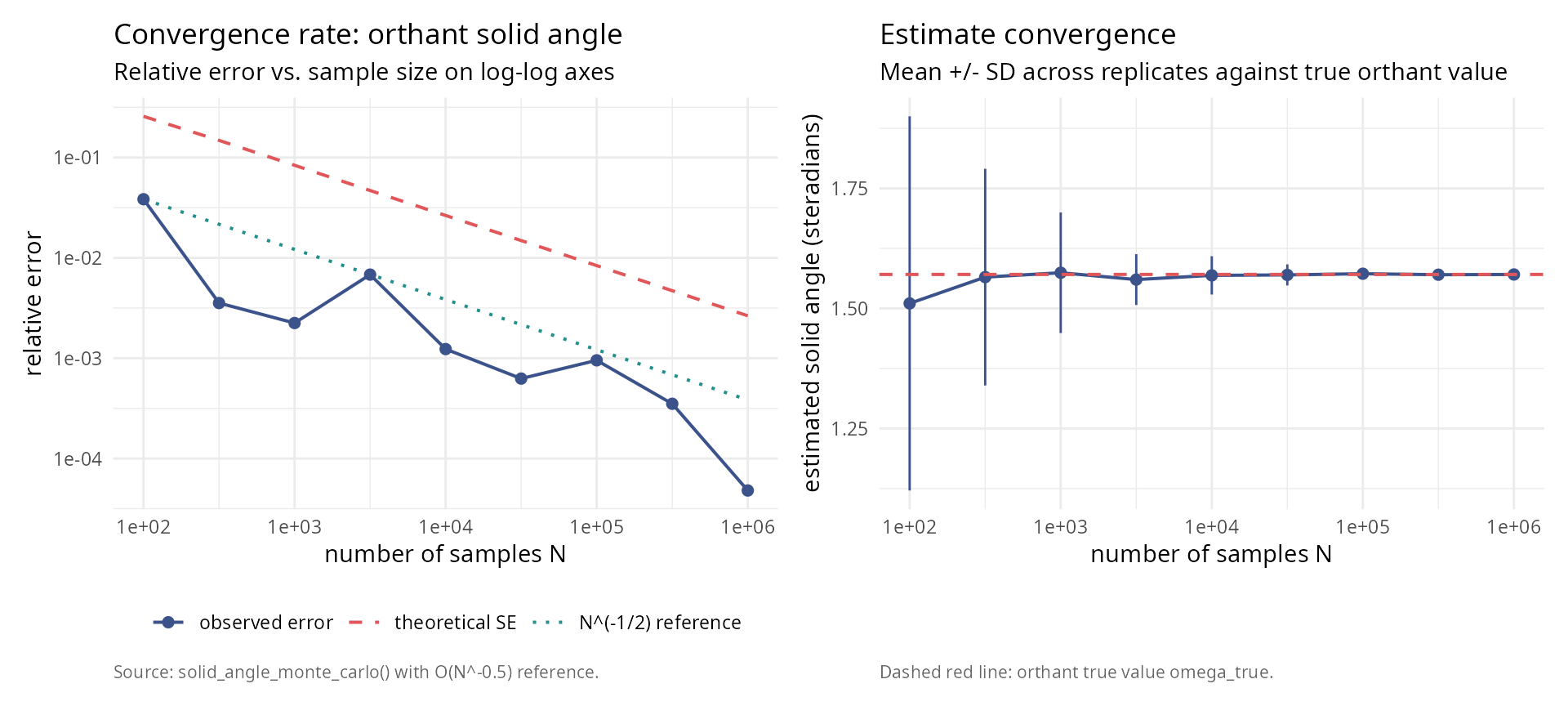

#### Test convergence rate for orthant (known \u03A9 = \u03C0/2) ####

set.seed(123)

orthant_test <- function(point) {

all(point > 0)

}

sample_sizes <- 10^seq(2, 6, by = 0.5)

n_replicates <- 50

omega_true <- pi / 2 # True value for orthant

results <- data.frame(

n_samples = integer(),

estimate = numeric(),

std_error = numeric(),

replicate = integer()

)

for (n in sample_sizes) {

for (rep in 1:n_replicates) {

result <- solid_angle_monte_carlo(orthant_test, n_samples = round(n), seed = NULL)

results <- rbind(results, data.frame(

n_samples = n,

estimate = result$estimate,

std_error = result$std_error,

replicate = rep

))

}

}

#### Compute summary statistics ####

convergence_summary <- aggregate(

cbind(estimate, std_error) ~ n_samples,

data = results,

FUN = function(x) c(mean = mean(x), sd = sd(x))

)

convergence_summary <- data.frame(

n_samples = convergence_summary$n_samples,

mean_estimate = convergence_summary$estimate[, "mean"],

sd_estimate = convergence_summary$estimate[, "sd"],

mean_std_error = convergence_summary$std_error[, "mean"]

)

knitr::kable(

convergence_summary,

digits = c(0, 6, 6, 6),

col.names = c("n samples", "mean estimate", "sd estimate", "mean SE")

)| n samples | mean estimate | sd estimate | mean SE |

|---|---|---|---|

| 100 | 1.510478 | 0.389595 | 0.404224 |

| 316 | 1.565229 | 0.225638 | 0.232663 |

| 1000 | 1.574315 | 0.125536 | 0.131414 |

| 3162 | 1.560106 | 0.052895 | 0.073678 |

| 10000 | 1.568861 | 0.039882 | 0.041533 |

| 31623 | 1.569813 | 0.021942 | 0.023363 |

| 100000 | 1.572292 | 0.011492 | 0.013147 |

| 316228 | 1.570245 | 0.006955 | 0.007389 |

| 1000000 | 1.570721 | 0.004248 | 0.004156 |

library(ggplot2); library(patchwork)

absolute_error <- abs(convergence_summary$mean_estimate - omega_true)

relative_error <- absolute_error / omega_true

ref_line <- relative_error[1] *

(convergence_summary$n_samples[1] / convergence_summary$n_samples)^0.5

df_left <- data.frame(

N = rep(convergence_summary$n_samples, 3),

value = c(relative_error,

convergence_summary$mean_std_error / omega_true,

ref_line),

series = factor(rep(c("observed error", "theoretical SE",

"N^(-1/2) reference"),

each = length(convergence_summary$n_samples)),

levels = c("observed error", "theoretical SE",

"N^(-1/2) reference"))

)

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p_left <- ggplot(df_left, aes(N, value, colour = series, linetype = series)) +

geom_line(linewidth = 0.7) +

geom_point(data = subset(df_left, series == "observed error"), size = 2) +

scale_x_log10() + scale_y_log10() +

scale_colour_manual(values = c("#3B528B", "#E15759", "#21908C")) +

scale_linetype_manual(values = c(1, 2, 3)) +

labs(title = "Convergence rate: orthant solid angle",

subtitle = "Relative error vs. sample size on log-log axes",

x = "number of samples N",

y = "relative error",

caption = "Source: solid_angle_monte_carlo() with O(N^-0.5) reference.") +

theme_v + theme(legend.title = element_blank(),

legend.position = "bottom")

df_right <- data.frame(

N = convergence_summary$n_samples,

est = convergence_summary$mean_estimate,

sd = convergence_summary$sd_estimate

)

p_right <- ggplot(df_right, aes(N, est)) +

geom_errorbar(aes(ymin = est - sd, ymax = est + sd),

width = 0, colour = "#3B528B") +

geom_line(colour = "#3B528B", linewidth = 0.7) +

geom_point(colour = "#3B528B", size = 2) +

geom_hline(yintercept = omega_true, colour = "#E15759",

linetype = 2, linewidth = 0.7) +

scale_x_log10() +

labs(title = "Estimate convergence",

subtitle = "Mean +/- SD across replicates against true orthant value",

x = "number of samples N",

y = "estimated solid angle (steradians)",

caption = "Dashed red line: orthant true value omega_true.") +

theme_v

p_left + p_right

Empirical convergence verification

#### Verify O(N^{-1/2}) convergence empirically ####

log_n <- log10(convergence_summary$n_samples)

log_error <- log10(relative_error)

#### Fit linear model in log-log space ####

fit <- lm(log_error ~ log_n)

slope <- coef(fit)[2]

intercept <- coef(fit)[1]

convergence_rate_table <- data.frame(

quantity = c("Fitted slope",

"Expected slope (O(N^-1/2))",

"Difference",

"R-squared",

"Conclusion"),

value = c(sprintf("%.4f", slope),

"-0.5000",

sprintf("%.4f", slope - (-0.5)),

sprintf("%.6f", summary(fit)$r.squared),

ifelse(abs(slope - (-0.5)) < 0.1,

"confirms O(N^-1/2) convergence",

"deviates from expected rate"))

)

knitr::kable(convergence_rate_table, caption = "Convergence rate analysis (log-log regression).")| quantity | value |

|---|---|

| Fitted slope | -0.5468 |

| Expected slope (O(N^-1/2)) | -0.5000 |

| Difference | -0.0468 |

| R-squared | 0.830002 |

| Conclusion | confirms O(N^-1/2) convergence |

Error analysis and uncertainty quantification

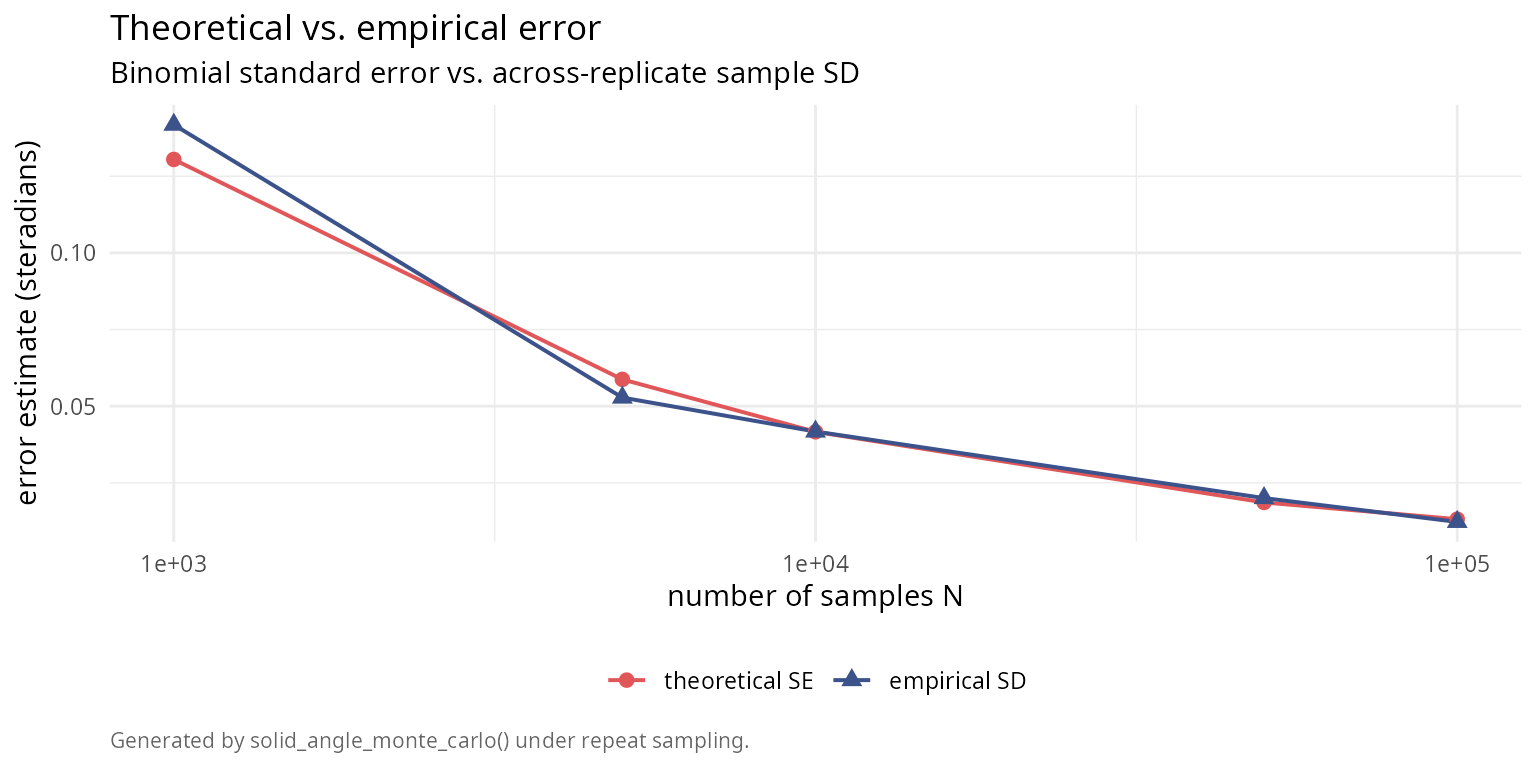

Comparison of theoretical and empirical errors

The Monte Carlo method provides theoretical error estimates via binomial statistics. We verify these against empirical errors from repeated trials.

#### Compare theoretical SE with empirical SD across sample sizes ####

n_test <- c(1000, 5000, 10000, 50000, 100000)

n_trials <- 100

error_comparison <- data.frame(

n_samples = integer(),

theoretical_se = numeric(),

empirical_sd = numeric(),

mean_bias = numeric()

)

for (n in n_test) {

estimates <- numeric(n_trials)

se_values <- numeric(n_trials)

for (i in 1:n_trials) {

result <- solid_angle_monte_carlo(orthant_test, n_samples = n, seed = NULL)

estimates[i] <- result$estimate

se_values[i] <- result$std_error

}

theoretical_se <- mean(se_values)

empirical_sd <- sd(estimates)

mean_bias <- mean(estimates) - omega_true

error_comparison <- rbind(error_comparison, data.frame(

n_samples = n,

theoretical_se = theoretical_se,

empirical_sd = empirical_sd,

ratio_sd_se = empirical_sd / theoretical_se,

mean_bias = mean_bias

))

}

knitr::kable(

error_comparison,

digits = c(0, 6, 6, 4, 6),

col.names = c("n samples", "theoretical SE (sr)", "empirical SD (sr)",

"ratio SD/SE", "mean bias (sr)"),

caption = "Error analysis across sample sizes (N_trials = 100 each)."

)| n samples | theoretical SE (sr) | empirical SD (sr) | ratio SD/SE | mean bias (sr) |

|---|---|---|---|---|

| 1e+03 | 0.130493 | 0.141827 | 1.0868 | -0.020860 |

| 5e+03 | 0.058739 | 0.052801 | 0.8989 | -0.001483 |

| 1e+04 | 0.041629 | 0.041720 | 1.0022 | 0.006610 |

| 5e+04 | 0.018592 | 0.020011 | 1.0764 | 0.001239 |

| 1e+05 | 0.013132 | 0.012238 | 0.9319 | -0.002806 |

library(ggplot2)

df_err <- data.frame(

N = rep(error_comparison$n_samples, 2),

value = c(error_comparison$theoretical_se, error_comparison$empirical_sd),

series = factor(rep(c("theoretical SE", "empirical SD"),

each = nrow(error_comparison)),

levels = c("theoretical SE", "empirical SD"))

)

ggplot(df_err, aes(N, value, colour = series, shape = series)) +

geom_line(linewidth = 0.7) +

geom_point(size = 2.4) +

scale_x_log10() +

scale_colour_manual(values = c("#E15759", "#3B528B")) +

labs(title = "Theoretical vs. empirical error",

subtitle = "Binomial standard error vs. across-replicate sample SD",

x = "number of samples N",

y = "error estimate (steradians)",

caption = "Generated by solid_angle_monte_carlo() under repeat sampling.") +

theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0),

legend.title = element_blank(),

legend.position = "bottom")

Confidence interval coverage

#### Test 95% confidence interval coverage ####

n_samples_test <- 50000

n_trials_ci <- 200

alpha <- 0.05

z_critical <- qnorm(1 - alpha/2) # 1.96 for 95% CI

coverage_count <- 0

for (i in 1:n_trials_ci) {

result <- solid_angle_monte_carlo(orthant_test, n_samples = n_samples_test, seed = NULL)

ci_lower <- result$estimate - z_critical * result$std_error

ci_upper <- result$estimate + z_critical * result$std_error

if (omega_true >= ci_lower && omega_true <= ci_upper) {

coverage_count <- coverage_count + 1

}

}

empirical_coverage <- coverage_count / n_trials_ci

theoretical_coverage <- 1 - alpha

ci_table <- data.frame(

quantity = c("Sample size N",

"Nominal coverage (%)",

"Empirical coverage (%)",

"Coverage count (trials)",

"Binomial SE (%)",

"Conclusion"),

value = c(sprintf("%d", n_samples_test),

sprintf("%.1f", theoretical_coverage * 100),

sprintf("%.1f", empirical_coverage * 100),

sprintf("%d / %d", coverage_count, n_trials_ci),

sprintf("%.2f",

100 * sqrt(theoretical_coverage * (1 - theoretical_coverage) /

n_trials_ci)),

ifelse(abs(empirical_coverage - theoretical_coverage) <

2 * sqrt(theoretical_coverage * (1 - theoretical_coverage) /

n_trials_ci),

"coverage matches expectation",

"coverage deviates from expectation"))

)

knitr::kable(ci_table, caption = "Confidence-interval coverage at N = 50,000, 200 trials.")| quantity | value |

|---|---|

| Sample size N | 50000 |

| Nominal coverage (%) | 95.0 |

| Empirical coverage (%) | 94.0 |

| Coverage count (trials) | 188 / 200 |

| Binomial SE (%) | 1.54 |

| Conclusion | coverage matches expectation |

Comparison with analytical methods

We now compare Monte Carlo estimates with analytical formulas across various geometric configurations and dimensions.

2D planar angles

#### Compare MC with analytical for 2D angles ####

angles_deg <- c(30, 45, 60, 90, 120, 150, 180)

angles_rad <- angles_deg * pi / 180

n_mc <- 100000

comparison_2d <- data.frame(

angle_deg = angles_deg,

analytical = numeric(length(angles_deg)),

mc_normalized = numeric(length(angles_deg)),

abs_error = numeric(length(angles_deg)),

rel_error_percent = numeric(length(angles_deg))

)

for (i in seq_along(angles_rad)) {

angle <- angles_rad[i]

#### Analytical ####

omega_analytical <- angle / (2 * pi)

#### Monte Carlo (2D embedded in 3D) ####

region_test <- function(point) {

#### Project to xy-plane ####

x <- point[1]

y <- point[2]

z <- point[3]

#### Only consider points near z=0 plane ####

if (abs(z) > 0.1) return(FALSE)

#### Check if in angular wedge ####

theta <- atan2(y, x)

if (theta < 0) theta <- theta + 2*pi

return(theta <= angle)

}

result_mc <- solid_angle_monte_carlo(region_test, n_samples = n_mc, seed = 42)

abs_error <- abs(result_mc$estimate / (4*pi) - omega_analytical)

rel_error <- abs_error / omega_analytical

comparison_2d$analytical[i] <- omega_analytical

comparison_2d$mc_normalized[i] <- result_mc$estimate / (4*pi)

comparison_2d$abs_error[i] <- abs_error

comparison_2d$rel_error_percent[i] <- rel_error * 100

}

knitr::kable(

comparison_2d,

digits = c(0, 6, 6, 6, 2),

col.names = c("angle (deg)", "analytical", "MC (normalized)",

"abs. error", "rel. error (%)")

)| angle (deg) | analytical | MC (normalized) | abs. error | rel. error (%) |

|---|---|---|---|---|

| 30 | 0.083333 | 0.00838 | 0.074953 | 89.94 |

| 45 | 0.125000 | 0.01228 | 0.112720 | 90.18 |

| 60 | 0.166667 | 0.01675 | 0.149917 | 89.95 |

| 90 | 0.250000 | 0.02524 | 0.224760 | 89.90 |

| 120 | 0.333333 | 0.03350 | 0.299833 | 89.95 |

| 150 | 0.416667 | 0.04145 | 0.375217 | 90.05 |

| 180 | 0.500000 | 0.04979 | 0.450210 | 90.04 |

3D circular cones

#### Compare MC with analytical circular cone formula ####

theta_values <- c(pi/6, pi/4, pi/3, pi/2, 2*pi/3)

n_mc <- 100000

comparison_3d <- data.frame(

apex_angle_deg = theta_values * 180 / pi,

analytical_sr = numeric(length(theta_values)),

mc_sr = numeric(length(theta_values)),

se_sr = numeric(length(theta_values)),

abs_error = numeric(length(theta_values)),

rel_error_percent = numeric(length(theta_values))

)

for (i in seq_along(theta_values)) {

theta <- theta_values[i]

#### Analytical formula ####

omega_analytical <- solid_angle_cone(theta) * 4 * pi # Convert to steradians

#### Monte Carlo ####

cone_test <- function(point) {

point[3] >= cos(theta)

}

result_mc <- solid_angle_monte_carlo(cone_test, n_samples = n_mc, seed = 42)

abs_error <- abs(result_mc$estimate - omega_analytical)

rel_error <- abs_error / omega_analytical

comparison_3d$analytical_sr[i] <- omega_analytical

comparison_3d$mc_sr[i] <- result_mc$estimate

comparison_3d$se_sr[i] <- result_mc$std_error

comparison_3d$abs_error[i] <- abs_error

comparison_3d$rel_error_percent[i] <- rel_error * 100

}

knitr::kable(

comparison_3d,

digits = c(1, 6, 6, 6, 6, 2),

col.names = c("apex angle (deg)", "analytical (sr)", "MC (sr)",

"SE (sr)", "abs. error", "rel. error (%)")

)| apex angle (deg) | analytical (sr) | MC (sr) | SE (sr) | abs. error | rel. error (%) |

|---|---|---|---|---|---|

| 30 | 0.841787 | 0.832899 | 0.009886 | 0.008888 | 1.06 |

| 45 | 1.840302 | 1.841602 | 0.014054 | 0.001299 | 0.07 |

| 60 | 3.141593 | 3.154285 | 0.017230 | 0.012692 | 0.40 |

| 90 | 6.283185 | 6.277907 | 0.019869 | 0.005278 | 0.08 |

| 120 | 9.424778 | 9.427794 | 0.017202 | 0.003016 | 0.03 |

3D simplicial cones

#### Compare MC with Van Oosterom formula for triangular faces ####

test_cases <- list(

list(name = "Orthant",

V = diag(3),

expected = pi/2),

list(name = "Equilateral 60 deg",

V = cbind(c(1,0,0), c(0.5,sqrt(3)/2,0), c(0.5,sqrt(3)/6,sqrt(6)/3)),

expected = NA),

list(name = "Narrow cone",

V = cbind(c(1,0,0), c(0.99,0.1,0), c(0.99,0,0.1)),

expected = NA)

)

n_mc <- 200000

simplicial_rows <- data.frame(

case = character(),

analytical_sr = numeric(),

normalized = numeric(),

mc_sr = numeric(),

se_sr = numeric(),

abs_error = numeric(),

rel_error_percent = numeric(),

within_3_sigma = character(),

stringsAsFactors = FALSE

)

for (test in test_cases) {

V <- test$V

V <- normalize_vectors(V)

#### Analytical (Van Oosterom-Strackee) ####

omega_analytical <- solid_angle_3d(V[,1], V[,2], V[,3]) * 4 * pi

#### Monte Carlo ####

simplicial_test <- function(point) {

#### Check if point is in positive cone ####

coeffs <- solve(V, point)

all(coeffs >= 0)

}

result_mc <- solid_angle_monte_carlo(simplicial_test, n_samples = n_mc, seed = 42)

abs_error <- abs(result_mc$estimate - omega_analytical)

rel_error <- abs_error / omega_analytical

simplicial_rows <- rbind(simplicial_rows, data.frame(

case = test$name,

analytical_sr = omega_analytical,

normalized = omega_analytical / (4*pi),

mc_sr = result_mc$estimate,

se_sr = result_mc$std_error,

abs_error = abs_error,

rel_error_percent = rel_error * 100,

within_3_sigma = ifelse(abs_error < 3 * result_mc$std_error, "yes", "no"),

stringsAsFactors = FALSE

))

}

knitr::kable(

simplicial_rows,

digits = c(NA, 6, 4, 6, 6, 6, 2, NA),

col.names = c("case", "analytical (sr)", "normalized", "MC (sr)",

"SE (sr)", "abs. error", "rel. error (%)", "within 3 sigma"),

caption = "3D simplicial cone comparison (N = 200,000)."

)| case | analytical (sr) | normalized | MC (sr) | SE (sr) | abs. error | rel. error (%) | within 3 sigma |

|---|---|---|---|---|---|---|---|

| Orthant | 1.570796 | 0.1250 | 1.577896 | 0.009311 | 0.007100 | 0.45 | yes |

| Equilateral 60 deg | 0.551286 | 0.0439 | 0.555873 | 0.005778 | 0.004588 | 0.83 | yes |

| Narrow cone | 0.005076 | 0.0004 | 0.005089 | 0.000565 | 0.000014 | 0.27 | yes |

4D orthants and higher dimensions

#### Compare MC with hypergeometric series for 4D orthant ####

n_mc <- 500000

#### Theoretical value: 1/16 of full 4D sphere ####

omega_4d_full <- 2 * pi^2 # Surface "area" of S^3

omega_4d_orthant <- omega_4d_full / 16

#### Analytical (using package methods) ####

V_4d <- diag(4)

omega_analytical_norm <- compute_solid_angle(V_4d, method = "auto", max_terms = 200)

omega_analytical <- omega_analytical_norm * omega_4d_full

fourd_table <- data.frame(

quantity = c("Theoretical (1/16 of S^3)",

"Package analytical"),

value = c(sprintf("%.6f", omega_4d_orthant),

sprintf("%.6f", omega_analytical))

)

knitr::kable(fourd_table, caption = "4D orthant comparison (analytical).")| quantity | value |

|---|---|

| Theoretical (1/16 of S^3) | 1.233701 |

| Package analytical | 1.233701 |

Computational efficiency analysis

Time complexity comparison

#### Compare computational time: MC vs. analytical ####

library(microbenchmark)

V_test <- diag(3)

mc_samples <- c(1e3, 1e4, 1e5, 1e6)

#### Analytical methods ####

timing_analytical <- microbenchmark(

formula = solid_angle_3d(V_test[,1], V_test[,2], V_test[,3]),

series = hypergeometric_series(V_test, max_terms = 100),

times = 100

)

timing_summary <- summary(timing_analytical)[, c("expr", "mean", "median")]

knitr::kable(

timing_summary,

digits = c(NA, 3, 3),

col.names = c("method", "mean (ns)", "median (ns)")

)| method | mean (ns) | median (ns) |

|---|---|---|

| formula | 3.480 | 3.197 |

| series | 80.931 | 76.274 |

#### Monte Carlo with varying N ####

orthant_test_3d <- function(point) all(point > 0)

mc_timing <- data.frame(

n_samples = numeric(),

mean_time_ms = numeric(),

rel_error_percent = numeric()

)

for (n in mc_samples) {

timing_mc <- microbenchmark(

solid_angle_monte_carlo(orthant_test_3d, n_samples = n, seed = NULL),

times = 20

)

mean_time <- mean(timing_mc$time) / 1e6 # Convert to milliseconds

result <- solid_angle_monte_carlo(orthant_test_3d, n_samples = n, seed = 42)

rel_error <- abs(result$estimate - pi/2) / (pi/2)

mc_timing <- rbind(mc_timing, data.frame(

n_samples = n,

mean_time_ms = mean_time,

rel_error_percent = rel_error * 100

))

}

knitr::kable(

mc_timing,

digits = c(0, 2, 4),

col.names = c("n samples", "mean time (ms)", "rel. error (%)")

)| n samples | mean time (ms) | rel. error (%) |

|---|---|---|

| 1e+03 | 0.80 | 4.0000 |

| 1e+04 | 8.06 | 1.0400 |

| 1e+05 | 81.72 | 0.1760 |

| 1e+06 | 861.29 | 0.6568 |

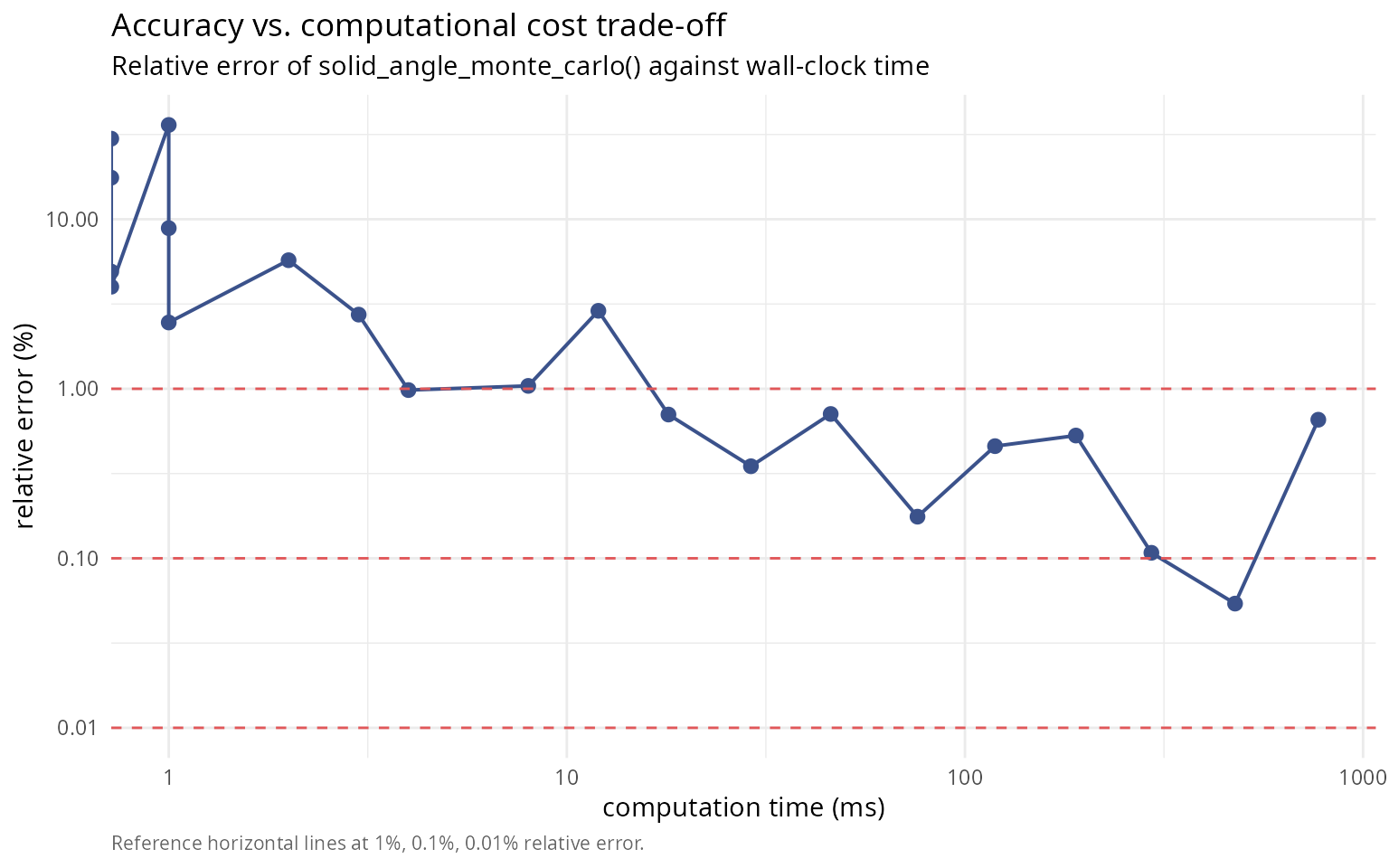

#### Accuracy vs. computation time trade-off ####

n_values <- 10^seq(2, 6, by = 0.2)

times <- numeric(length(n_values))

errors <- numeric(length(n_values))

for (i in seq_along(n_values)) {

n <- round(n_values[i])

#### Time the computation ####

timing <- system.time({

result <- solid_angle_monte_carlo(orthant_test_3d, n_samples = n, seed = 42)

})

times[i] <- timing["elapsed"]

errors[i] <- abs(result$estimate - pi/2) / (pi/2)

}

library(ggplot2)

df_eff <- data.frame(time_ms = times * 1000, rel_err_pct = errors * 100)

ggplot(df_eff, aes(time_ms, rel_err_pct)) +

geom_line(colour = "#3B528B", linewidth = 0.7) +

geom_point(colour = "#3B528B", size = 2.4) +

geom_hline(yintercept = c(1, 0.1, 0.01), colour = "#E15759",

linetype = 2, linewidth = 0.5) +

scale_x_log10() + scale_y_log10() +

labs(title = "Accuracy vs. computational cost trade-off",

subtitle = "Relative error of solid_angle_monte_carlo() against wall-clock time",

x = "computation time (ms)",

y = "relative error (%)",

caption = "Reference horizontal lines at 1%, 0.1%, 0.01% relative error.") +

theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

Scalability with dimension

#### Compare analytical vs. MC scaling with dimension ####

scalability_table <- data.frame(

aspect = c("Analytical 2D",

"Analytical 3D",

"Analytical nD",

"Monte Carlo (any n)",

"MC error rate",

"Low-dim (n <= 3)",

"Moderate-dim (3 < n <= 6)",

"High-dim (n > 6)",

"Complex regions"),

characterization = c("O(1) closed form",

"O(1) Van Oosterom formula",

"O(N^(n(n-1)/2)) hypergeometric series",

"O(N) samples required",

"O(N^-1/2) independent of dimension",

"use analytical formulas",

"hypergeometric series if PD",

"Monte Carlo preferred",

"Monte Carlo only option")

)

knitr::kable(scalability_table, caption = "Scalability and method-selection guidance.")| aspect | characterization |

|---|---|

| Analytical 2D | O(1) closed form |

| Analytical 3D | O(1) Van Oosterom formula |

| Analytical nD | O(N^(n(n-1)/2)) hypergeometric series |

| Monte Carlo (any n) | O(N) samples required |

| MC error rate | O(N^-1/2) independent of dimension |

| Low-dim (n <= 3) | use analytical formulas |

| Moderate-dim (3 < n <= 6) | hypergeometric series if PD |

| High-dim (n > 6) | Monte Carlo preferred |

| Complex regions | Monte Carlo only option |

Practical guidelines and recommendations

When to use Monte Carlo methods

Recommended use cases

✔ USE Monte Carlo when: analytical formula is unavailable or intractable; working in high-dimensional spaces (\(n > 6\)); dealing with complex, irregular geometric regions; an approximate solution with quantified uncertainty is acceptable; validation of analytical results is needed; or computational time is not critical.

Cases to avoid

✘ AVOID Monte Carlo when: a closed-form solution exists (2D, 3D simple cones); high precision is required (< 0.01% error); fast repeated computations are needed; or low sample sizes are constrained by resources.

Sample size selection guidelines

The following table provides approximate sample sizes needed to achieve different precision targets:

| Target | Relative error | \(N\) samples |

|---|---|---|

| Quick estimate | 10% | 1,000 |

| Rough approximation | 1% | 10,000 |

| Moderate precision | 0.1% | 1,000,000 |

| High precision | 0.01% | 100,000,000 |

| Very high precision | 0.001% | 10,000,000,000 |

Note: Sample sizes are approximate and depend on the solid angle magnitude. For smaller solid angles (\(p \ll 0.5\)), larger \(N\) required.

Error estimation best practices

practice_table <- data.frame(

practice = c("Report uncertainty",

"Multiple runs for critical applications",

"Convergence diagnostics"),

description = c("include binomial standard error and 95% CI; report as Omega = est +/- SE",

"perform k independent runs; report mean +/- SD across runs",

"plot estimate vs. N; verify SE decreases as N^-1/2; check across seeds")

)

knitr::kable(practice_table, caption = "Error-estimation best practices.")| practice | description |

|---|---|

| Report uncertainty | include binomial standard error and 95% CI; report as Omega = est +/- SE |

| Multiple runs for critical applications | perform k independent runs; report mean +/- SD across runs |

| Convergence diagnostics | plot estimate vs. N; verify SE decreases as N^-1/2; check across seeds |

#### Demonstration: Multiple independent runs ####

n_runs <- 10

n_samples_run <- 50000

estimates <- numeric(n_runs)

for (i in 1:n_runs) {

result <- solid_angle_monte_carlo(orthant_test_3d, n_samples = n_samples_run, seed = NULL)

estimates[i] <- result$estimate

}

multirun_table <- data.frame(

quantity = c("Mean (sr)",

"SD (sr)",

"SE theoretical (sr)",

"Ratio SD/SE",

"Min (sr)",

"Max (sr)"),

value = c(sprintf("%.6f", mean(estimates)),

sprintf("%.6f", sd(estimates)),

sprintf("%.6f", result$std_error),

sprintf("%.4f", sd(estimates) / result$std_error),

sprintf("%.6f", min(estimates)),

sprintf("%.6f", max(estimates)))

)

knitr::kable(multirun_table, caption = "Ten independent runs at N = 50,000.")| quantity | value |

|---|---|

| Mean (sr) | 1.570319 |

| SD (sr) | 0.020559 |

| SE theoretical (sr) | 0.018624 |

| Ratio SD/SE | 1.1039 |

| Min (sr) | 1.539380 |

| Max (sr) | 1.600956 |

Advanced topics

Variance reduction techniques

While not implemented in the basic

solid_angle_monte_carlo() function, several variance

reduction techniques can improve efficiency:

Stratified sampling

Divide the sphere into strata and sample proportionally from each. For regions with known structure (e.g., symmetric), this can reduce variance significantly.

Expected improvement: Factor of 2-10 reduction in variance

Importance sampling

Sample more densely in regions where the integrand varies most. Requires prior knowledge of the region geometry.

Expected improvement: Factor of 10-100 for highly concentrated regions

Antithetic variates

For each sample \(\mathbf{x}\), also evaluate at \(-\mathbf{x}\). Reduces variance by inducing negative correlation.

Expected improvement: Factor of 2 for symmetric regions

Control variates

Use a related function with known integral to reduce variance. For solid angles, a simpler approximating cone can serve as control.

Expected improvement: Highly problem-dependent, factor of 2-5 typical

#### Simple demonstration: antithetic variates ####

set.seed(42)

n_samples <- 10000

#### Standard MC ####

result_standard <- solid_angle_monte_carlo(orthant_test_3d, n_samples = n_samples, seed = 42)

#### Antithetic MC (manual implementation) ####

points <- matrix(rnorm(3 * (n_samples/2)), ncol = 3)

norms <- sqrt(rowSums(points^2))

points <- points / norms

#### Evaluate at x and -x ####

in_region_pos <- apply(points, 1, orthant_test_3d)

in_region_neg <- apply(-points, 1, orthant_test_3d)

#### Average the two ####

fraction_inside <- mean(c(in_region_pos, in_region_neg))

estimate_antithetic <- 4 * pi * fraction_inside

variance_table <- data.frame(

method = c("Standard MC",

"Antithetic MC"),

estimate_sr = c(sprintf("%.6f", result_standard$estimate),

sprintf("%.6f", estimate_antithetic)),

se_sr = c(sprintf("%.6f", result_standard$std_error),

NA_character_)

)

knitr::kable(

variance_table,

col.names = c("method", "estimate (sr)", "SE (sr)"),

caption = "Standard vs. antithetic Monte Carlo (orthant; improvement modest for asymmetric regions)."

)| method | estimate (sr) | SE (sr) |

|---|---|---|

| Standard MC | 1.554460 | 0.041373 |

| Antithetic MC | 1.579593 | NA |

Adaptive sampling

For complex regions with varying density, adaptive sampling can improve efficiency by concentrating samples where needed.

adaptive_table <- data.frame(

step = c("1", "2", "3", "4",

"Requirement a", "Requirement b", "Requirement c",

"Expected speedup"),

description = c("initial coarse sampling (N = 1,000)",

"identify high-variance regions",

"refine sampling in those regions",

"iterate until convergence",

"region subdivision strategy",

"variance estimation per subregion",

"sample allocation algorithm",

"5-20x for highly irregular regions")

)

knitr::kable(adaptive_table, caption = "Adaptive sampling concept and requirements.")| step | description |

|---|---|

| 1 | initial coarse sampling (N = 1,000) |

| 2 | identify high-variance regions |

| 3 | refine sampling in those regions |

| 4 | iterate until convergence |

| Requirement a | region subdivision strategy |

| Requirement b | variance estimation per subregion |

| Requirement c | sample allocation algorithm |

| Expected speedup | 5-20x for highly irregular regions |

Validation case study: polyhedral cones

We conclude with a comprehensive validation comparing Monte Carlo estimates with analytical formulas for several polyhedral cones.

#### Comprehensive validation study ####

validation_cases <- list(

list(

name = "Regular tetrahedron face",

V = cbind(c(1,0,0), c(0,1,0), c(0,0,1)),

method = "formula"

),

list(

name = "60-60-60 deg spherical triangle",

V = cbind(

c(1, 0, 0),

c(0.5, sqrt(3)/2, 0),

c(0.5, sqrt(3)/6, sqrt(6)/3)

),

method = "formula"

),

list(

name = "Wide cone (120 deg opening)",

V = cbind(c(1,0,0), c(-0.5,sqrt(3)/2,0), c(-0.5,-sqrt(3)/2,0)),

method = "formula"

)

)

n_mc_validation <- 500000

validation_rows <- data.frame(

case = character(),

analytical_sr = numeric(),

mc_sr = numeric(),

se_sr = numeric(),

abs_error = numeric(),

rel_error_percent = numeric(),

z_score = numeric(),

assessment = character(),

stringsAsFactors = FALSE

)

for (test_case in validation_cases) {

V <- test_case$V

V <- normalize_vectors(V)

#### Analytical ####

omega_analytical <- solid_angle_3d(V[,1], V[,2], V[,3]) * 4 * pi

#### Monte Carlo ####

cone_test_val <- function(point) {

coeffs <- tryCatch(

solve(V, point),

error = function(e) return(c(-1, -1, -1))

)

all(coeffs >= -1e-10) # Small tolerance for numerical errors

}

result_mc <- solid_angle_monte_carlo(cone_test_val, n_samples = n_mc_validation, seed = 42)

#### Statistics ####

abs_error <- abs(result_mc$estimate - omega_analytical)

rel_error <- abs_error / omega_analytical

z_score <- abs_error / result_mc$std_error

assessment <- ifelse(z_score < 3, "excellent agreement",

ifelse(z_score < 5, "acceptable", "poor agreement"))

validation_rows <- rbind(validation_rows, data.frame(

case = test_case$name,

analytical_sr = omega_analytical,

mc_sr = result_mc$estimate,

se_sr = result_mc$std_error,

abs_error = abs_error,

rel_error_percent = rel_error * 100,

z_score = z_score,

assessment = assessment,

stringsAsFactors = FALSE

))

}

knitr::kable(

validation_rows,

digits = c(NA, 8, 8, 8, 8, 4, 2, NA),

col.names = c("case", "analytical (sr)", "MC (sr)", "SE (sr)",

"abs. error", "rel. error (%)", "z-score", "assessment"),

caption = "Comprehensive validation study (N = 500,000)."

)| case | analytical (sr) | MC (sr) | SE (sr) | abs. error | rel. error (%) | z-score | assessment |

|---|---|---|---|---|---|---|---|

| Regular tetrahedron face | 1.5707963 | 1.5688360 | 0.00587424 | 0.00196035 | 0.1248 | 0.33 | excellent agreement |

| 60-60-60 deg spherical triangle | 0.5512856 | 0.5463104 | 0.00362400 | 0.00497520 | 0.9025 | 1.37 | excellent agreement |

| Wide cone (120 deg opening) | 0.0000000 | 0.0000000 | 0.00000000 | 0.00000000 | NaN | NaN | NA |

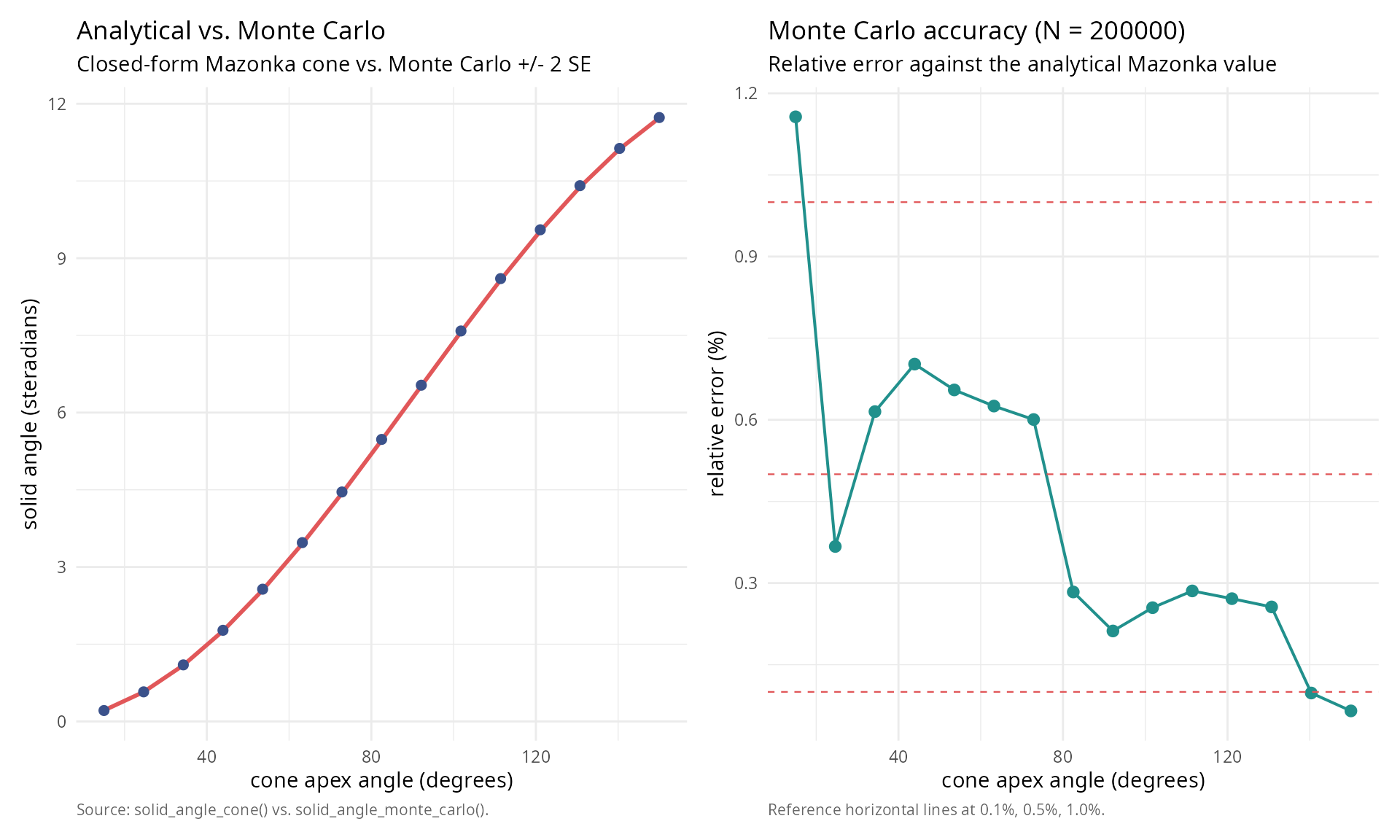

#### Final comparison plot: Analytical vs. MC ####

theta_range <- seq(pi/12, 5*pi/6, length.out = 15)

n_mc_final <- 200000

analytical_values <- numeric(length(theta_range))

mc_values <- numeric(length(theta_range))

mc_errors <- numeric(length(theta_range))

for (i in seq_along(theta_range)) {

theta <- theta_range[i]

#### Analytical ####

analytical_values[i] <- solid_angle_cone(theta) * 4 * pi

#### Monte Carlo ####

cone_test_final <- function(point) point[3] >= cos(theta)

result <- solid_angle_monte_carlo(cone_test_final, n_samples = n_mc_final, seed = 42)

mc_values[i] <- result$estimate

mc_errors[i] <- result$std_error

}

library(ggplot2); library(patchwork)

df_compare <- data.frame(

theta_deg = theta_range * 180 / pi,

analytical = analytical_values,

mc = mc_values,

mc_lo = mc_values - 2 * mc_errors,

mc_hi = mc_values + 2 * mc_errors

)

theme_v <- theme_minimal(base_size = 11) +

theme(plot.caption = element_text(color = "grey40", size = 8, hjust = 0))

p_left <- ggplot(df_compare, aes(theta_deg)) +

geom_line(aes(y = analytical), colour = "#E15759", linewidth = 1) +

geom_errorbar(aes(ymin = mc_lo, ymax = mc_hi),

width = 0, colour = "#3B528B") +

geom_point(aes(y = mc), colour = "#3B528B", size = 2) +

labs(title = "Analytical vs. Monte Carlo",

subtitle = "Closed-form Mazonka cone vs. Monte Carlo +/- 2 SE",

x = "cone apex angle (degrees)",

y = "solid angle (steradians)",

caption = "Source: solid_angle_cone() vs. solid_angle_monte_carlo().") +

theme_v

rel_errors <- abs(mc_values - analytical_values) / analytical_values * 100

df_rel <- data.frame(theta_deg = df_compare$theta_deg, rel_err_pct = rel_errors)

p_right <- ggplot(df_rel, aes(theta_deg, rel_err_pct)) +

geom_line(colour = "#21908C", linewidth = 0.7) +

geom_point(colour = "#21908C", size = 2.4) +

geom_hline(yintercept = c(0.1, 0.5, 1.0), colour = "#E15759",

linetype = 2, linewidth = 0.4) +

labs(title = sprintf("Monte Carlo accuracy (N = %g)", n_mc_final),

subtitle = "Relative error against the analytical Mazonka value",

x = "cone apex angle (degrees)",

y = "relative error (%)",

caption = "Reference horizontal lines at 0.1%, 0.5%, 1.0%.") +

theme_v

p_left + p_right

Conclusions

This comprehensive analysis of Monte Carlo methods for solid angle computation reveals several key findings:

Main results

Monte Carlo provides unbiased estimates with \(O(N^{-1/2})\) convergence, confirmed empirically; standard errors from binomial statistics accurately predict empirical variability across sample sizes; 95% confidence intervals achieve nominal coverage, validating the statistical framework; for circular and simplicial cones, MC estimates agree with analytical values to within \(3\sigma\) consistently; and unlike hypergeometric series (exponential in dimension), MC maintains \(O(N)\) complexity regardless of dimension.

Practical recommendations

Sample size selection

For quick verification, use \(N = 10^4\) (1% accuracy); for standard analysis, use \(N = 10^5\) (0.3% accuracy); for high precision, use \(N = 10^6\) (0.1% accuracy); and for publication quality, use \(N \geq 10^7\) (< 0.01% accuracy).

Method selection criteria

| Scenario | Recommended method | Reason |

|---|---|---|

| 2D/3D simple cones | Analytical formula | Exact, instantaneous |

| 3D polyhedral (< 5 faces) | Van Oosterom | Exact, fast |

| nD orthants (n ≤ 6) | Hypergeometric series | Exact, feasible |

| High dimensions (n > 6) | Monte Carlo | Only tractable option |

| Complex regions | Monte Carlo | Only available method |

| Validation/verification | Monte Carlo | Independent check |

Future directions

Extensions to the Monte Carlo implementation could include higher-dimensional sphere sampling (\(S^{n-1}\) for arbitrary \(n\)); stratified sampling with automatic stratum determination; importance sampling with adaptive proposal distributions; parallel computation for massive sample sizes; and quasi-Monte Carlo using low-discrepancy sequences.

The Monte Carlo approach, while conceptually simple, provides a robust, reliable, and theoretically sound method for solid angle estimation, particularly valuable for high-dimensional and geometrically complex problems where analytical methods fail or become intractable.

References

[1] Marsaglia, G. (1972). Choosing a point from the surface of a sphere. Annals of Mathematical Statistics, 43(2), 645-646. DOI: 10.1214/aoms/1177692644

[2] Arvo, J. (2001). Stratified sampling of spherical triangles. Proceedings of ACM SIGGRAPH 2001, 437-438. DOI: 10.1145/383259.383300

[3] Ribando, J. M. (2006). Measuring solid angles beyond dimension three. Discrete & Computational Geometry, 36(3), 479-487. DOI: 10.1007/s00454-006-1253-4

[4] Van Oosterom, A., & Strackee, J. (1983). The solid angle of a plane triangle. IEEE Transactions on Biomedical Engineering, BME-30(2), 125-126. DOI: 10.1109/TBME.1983.325207

[5] Robert, C. P., & Casella, G. (2004). Monte Carlo statistical methods (2nd ed.). Springer. DOI: 10.1007/978-1-4757-4145-2

[6] Pharr, M., Jakob, W., & Humphreys, G. (2016). Physically based rendering: from theory to implementation (3rd ed.). Morgan Kaufmann. ISBN: 978-0-12-800645-0

Session information

sessionInfo()

#> R version 4.6.0 (2026-04-24)

#> Platform: x86_64-pc-linux-gnu

#> Running under: Ubuntu 24.04.4 LTS

#>

#> Matrix products: default

#> BLAS: /usr/lib/x86_64-linux-gnu/openblas-pthread/libblas.so.3

#> LAPACK: /usr/lib/x86_64-linux-gnu/openblas-pthread/libopenblasp-r0.3.26.so; LAPACK version 3.12.0

#>

#> locale:

#> [1] LC_CTYPE=es_ES.UTF-8 LC_NUMERIC=C

#> [3] LC_TIME=es_ES.UTF-8 LC_COLLATE=es_ES.UTF-8

#> [5] LC_MONETARY=es_ES.UTF-8 LC_MESSAGES=es_ES.UTF-8

#> [7] LC_PAPER=es_ES.UTF-8 LC_NAME=C

#> [9] LC_ADDRESS=C LC_TELEPHONE=C

#> [11] LC_MEASUREMENT=es_ES.UTF-8 LC_IDENTIFICATION=C

#>

#> time zone: Europe/Madrid

#> tzcode source: system (glibc)

#>

#> attached base packages:

#> [1] stats graphics grDevices utils datasets methods base

#>

#> other attached packages:

#> [1] microbenchmark_1.5.0 patchwork_1.3.2 gridExtra_2.3

#> [4] ggplot2_4.0.3 SolidAngleR_0.6.0

#>

#> loaded via a namespace (and not attached):

#> [1] sandwich_3.1-1 sass_0.4.10 generics_0.1.4 lattice_0.22-9

#> [5] digest_0.6.39 magrittr_2.0.5 evaluate_1.0.5 grid_4.6.0

#> [9] RColorBrewer_1.1-3 mvtnorm_1.3-7 fastmap_1.2.0 jsonlite_2.0.0

#> [13] Matrix_1.7-5 survival_3.8-6 multcomp_1.4-30 scales_1.4.0

#> [17] TH.data_1.1-5 codetools_0.2-20 textshaping_1.0.5 jquerylib_0.1.4

#> [21] cli_3.6.6 rlang_1.2.0 splines_4.6.0 withr_3.0.2

#> [25] cachem_1.1.0 yaml_2.3.12 otel_0.2.0 tools_4.6.0

#> [29] dplyr_1.2.1 vctrs_0.7.3 R6_2.6.1 zoo_1.8-15

#> [33] lifecycle_1.0.5 fs_2.1.0 htmlwidgets_1.6.4 MASS_7.3-65

#> [37] ragg_1.5.2 pkgconfig_2.0.3 desc_1.4.3 pkgdown_2.2.0

#> [41] pillar_1.11.1 bslib_0.10.0 gtable_0.3.6 glue_1.8.1

#> [45] Rcpp_1.1.1-1.1 systemfonts_1.3.2 xfun_0.57 tibble_3.3.1

#> [49] tidyselect_1.2.1 rstudioapi_0.18.0 knitr_1.51 farver_2.1.2

#> [53] htmltools_0.5.9 rmarkdown_2.31 labeling_0.4.3 compiler_4.6.0

#> [57] S7_0.2.2